Today is an exciting day at Pure Storage! We are holding our first user conference, Pure//Accelerate, and announcing new products, FlashArray//m10 and FlashBlade, along with several new solutions. One of the solutions I am very passionate about is our new Pure Storage® All-Flash Cloud for Microsoft Azure offering. The Pure Storage All-Flash Cloud for Microsoft Azure is a cloud solution that allows enterprises to easily connect and create secure, scalable, on-demand infrastructure using Microsoft Azure services, combined with the performance and resiliency of the Pure Storage FlashArray platform. The solution uses a high-speed, private Microsoft Azure ExpressRoute connection through the Equinix Cloud Exchange to connect Azure virtual machine services with Pure Storage FlashArray equipment co-located in an Equinix data center.

The diagram below illustrates the high-level connectivity components.

The Pure Storage All-Flash Cloud for Microsoft Azure can be managed with the Azure portal or with Windows PowerShell, and extensive scripts can be developed using Pure Storage Open Connect for Microsoft Azure. The mechanisms below are described in order from least complex to most extensible.

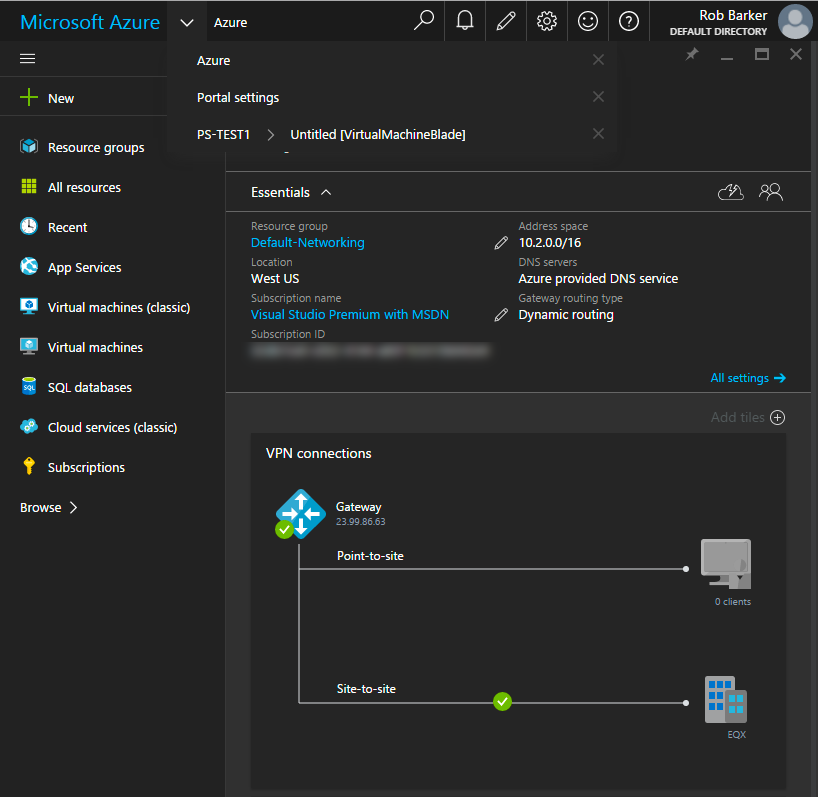

Azure Portal – The Azure portal can be used to create and manage all services. The screenshot below shows the Resource Manager portal view of the virtual network connection established between the cloud and the Equinix data center.

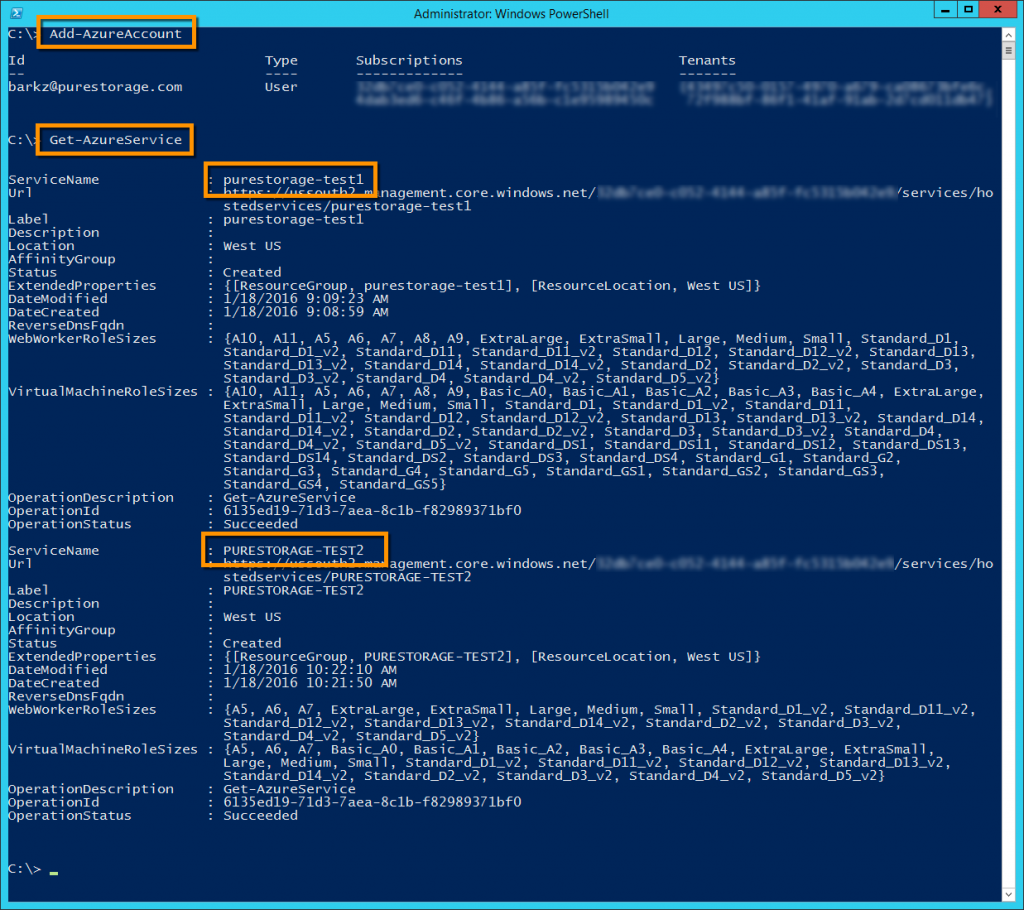

Windows PowerShell – Provides full management through the cmdlets in the Azure PowerShell module. The screenshot below shows adding an Azure account to the session with Add-AzureAccount and then querying the services configured under that account. It illustrates the virtual network connection from the Azure cloud via ExpressRoute to the Equinix Cloud Exchange, where the Pure Storage FlashArray is co-located. The FlashArray is connected via redundant 1 Gb/s iSCSI connections.

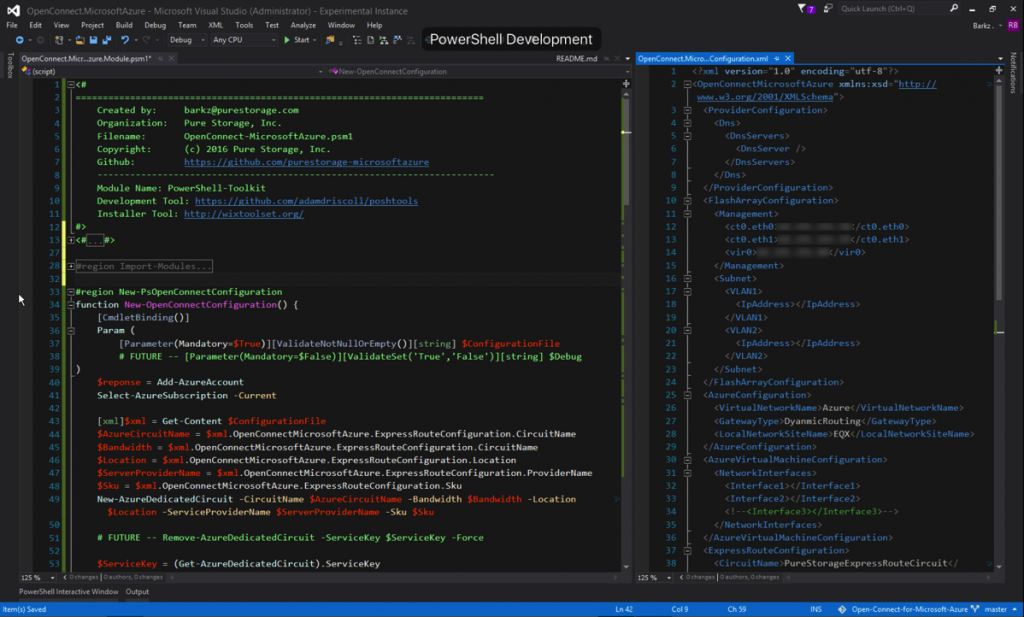

The Azure PowerShell package includes two modules used in this solution: Azure and ExpressRoute. Each module contains specific cmdlets for setting up the components that connect the Azure cloud to the Equinix Cloud Exchange. One of the value propositions of the Pure Storage All-Flash Cloud for Microsoft Azure is automating the connectivity between Pure Storage systems and Azure via ExpressRoute. This is done with Pure Storage Open Connect for Microsoft Azure, a community-developed PowerShell module that provides wrapper cmdlets around the required Azure cmdlets for setting up the ExpressRoute and cloud components.

Below is an example of the new PowerShell module shown in Visual Studio 2015. The module is implemented as a Script Module for easy extensibility and customization. Instead of scripting each cmdlet call with its various parameters, customers and partners enter their settings in a custom configuration XML file and simply pass that file in to configure the different components.
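To illustrate the configuration-file approach, here is a minimal Python sketch of how a wrapper might load and validate such a file before calling any cmdlets. The element and attribute names (`OpenConnectConfig`, `ExpressRouteCircuit`, `BandwidthMbps`, and so on) and the sample values are hypothetical assumptions, not the schema the Open Connect module actually uses; the point is only that every required setting lives in one file and is checked up front.

```python
from __future__ import annotations

import xml.etree.ElementTree as ET

# Hypothetical configuration file. Element and attribute names are
# illustrative only; they are NOT the Open Connect module's real schema.
SAMPLE_CONFIG = """
<OpenConnectConfig>
  <Subscription Name="Contoso-DevTest" />
  <ExpressRouteCircuit Name="PureCircuit" ServiceProvider="Equinix"
                       Location="Silicon Valley" BandwidthMbps="1000" />
  <VirtualNetwork Name="PureVNet" AddressPrefix="10.1.0.0/16" />
</OpenConnectConfig>
"""

# Required attributes for each hypothetical top-level element.
REQUIRED = {
    "Subscription": ["Name"],
    "ExpressRouteCircuit": ["Name", "ServiceProvider", "Location", "BandwidthMbps"],
    "VirtualNetwork": ["Name", "AddressPrefix"],
}


def load_config(xml_text: str) -> dict[str, dict[str, str]]:
    """Parse the config and return {element name: {attribute: value}}.

    Raises ValueError if a required element or attribute is missing, so a
    wrapper can stop before issuing any cloud-side command.
    """
    root = ET.fromstring(xml_text.strip())
    settings: dict[str, dict[str, str]] = {}
    for tag, attrs in REQUIRED.items():
        node = root.find(tag)
        if node is None:
            raise ValueError(f"missing <{tag}> element")
        missing = [a for a in attrs if a not in node.attrib]
        if missing:
            raise ValueError(f"<{tag}> is missing attributes: {missing}")
        settings[tag] = dict(node.attrib)
    return settings


if __name__ == "__main__":
    cfg = load_config(SAMPLE_CONFIG)
    circuit = cfg["ExpressRouteCircuit"]
    print(f"Circuit {circuit['Name']} via {circuit['ServiceProvider']} "
          f"at {circuit['BandwidthMbps']} Mbps")
```

Validating the whole file first means a typo surfaces as one clear error rather than partway through a sequence of cmdlet calls.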

The connection between the Azure cloud and the Equinix Cloud Exchange tested during solution validation was 1 Gb/s (10 Gb/s connections can also be used). The primary use case highlighted in the solution validation was Dev/Test with Microsoft SQL Server 2014. Several tests were performed using DiskSpd and a TPC-E-like benchmarking tool. Using the database populated during the TPC-E-like testing, several FlashRecover snapshots were created and connected to different Azure virtual machines, showing the ability to scale out Dev/Test compute resources on demand. This is fully documented in the Configuration and Deployment Guide.
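For readers who want to run a similar storage test, the sketch below builds a DiskSpd command line in Python. The flags (`-c`, `-b`, `-d`, `-t`, `-o`, `-r`, `-w`, `-Sh`, `-L`) are standard DiskSpd options, but the values chosen here (8K blocks to match SQL Server's 8 KB page size, 60-second runs, 4 threads, 8 outstanding I/Os, a 10 GB test file, 25% writes) and the `F:` target path are assumptions for illustration, not the parameters used in the solution validation.

```python
from __future__ import annotations


def diskspd_args(target: str, write_pct: int, block_size: str = "8K",
                 duration_s: int = 60, threads: int = 4, outstanding: int = 8,
                 file_size: str = "10G") -> list[str]:
    """Build a DiskSpd argument list for a random-I/O read/write mix.

    All default values are illustrative assumptions, not tuned settings.
    """
    if not 0 <= write_pct <= 100:
        raise ValueError("write_pct must be between 0 and 100")
    return [
        "diskspd.exe",
        f"-c{file_size}",    # create the test file at this size
        f"-b{block_size}",   # I/O block size
        f"-d{duration_s}",   # test duration in seconds
        f"-t{threads}",      # threads per target
        f"-o{outstanding}",  # outstanding I/Os per thread
        "-r",                # random I/O
        f"-w{write_pct}",    # percentage of writes; the remainder are reads
        "-Sh",               # disable software caching and hardware write caching
        "-L",                # collect latency statistics
        target,
    ]


if __name__ == "__main__":
    # Hypothetical test file on a FlashArray-backed volume of a Windows VM.
    print(" ".join(diskspd_args(r"F:\diskspd\test.dat", write_pct=25)))
```

Running it prints `diskspd.exe -c10G -b8K -d60 -t4 -o8 -r -w25 -Sh -L F:\diskspd\test.dat`, which could then be executed on a Windows VM (for example via `subprocess.run` with the list form, which avoids shell quoting issues).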

Below is an example workload from the TPC-E-like benchmark showing some basic performance measurements. It illustrates sub-millisecond latency while handling a mix of 75% reads and 25% writes.
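As a quick worked example of what a 75/25 mix means, the sketch below splits a total IOPS figure into reads and writes and computes the average latency across all I/Os as the I/O-count-weighted mean of read and write latency. The inputs (20,000 IOPS, 0.5 ms reads, 0.75 ms writes) are hypothetical and are not measured results from this testing.

```python
from __future__ import annotations


def split_iops(total_iops: float, read_fraction: float) -> tuple[float, float]:
    """Split total IOPS into (read IOPS, write IOPS) for a given read fraction."""
    if not 0.0 <= read_fraction <= 1.0:
        raise ValueError("read_fraction must be between 0 and 1")
    read_iops = total_iops * read_fraction
    # Derive writes from the remainder so the two parts always sum to the total.
    return read_iops, total_iops - read_iops


def blended_latency_ms(read_ms: float, write_ms: float, read_fraction: float) -> float:
    """Mean latency over all I/Os, weighting each I/O type by its share of the count."""
    return read_fraction * read_ms + (1.0 - read_fraction) * write_ms


if __name__ == "__main__":
    reads, writes = split_iops(20_000, 0.75)
    print(reads, writes)                        # 15000.0 5000.0
    print(blended_latency_ms(0.5, 0.75, 0.75))  # 0.5625
```

Because reads dominate a 75/25 mix, the overall average stays close to the read latency even when writes are somewhat slower.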

Resource Downloads

- Pure Storage All-Flash Cloud for Microsoft Azure

- Pure Storage Open Connect for Microsoft Azure (GitHub)

Thanks,

Barkz