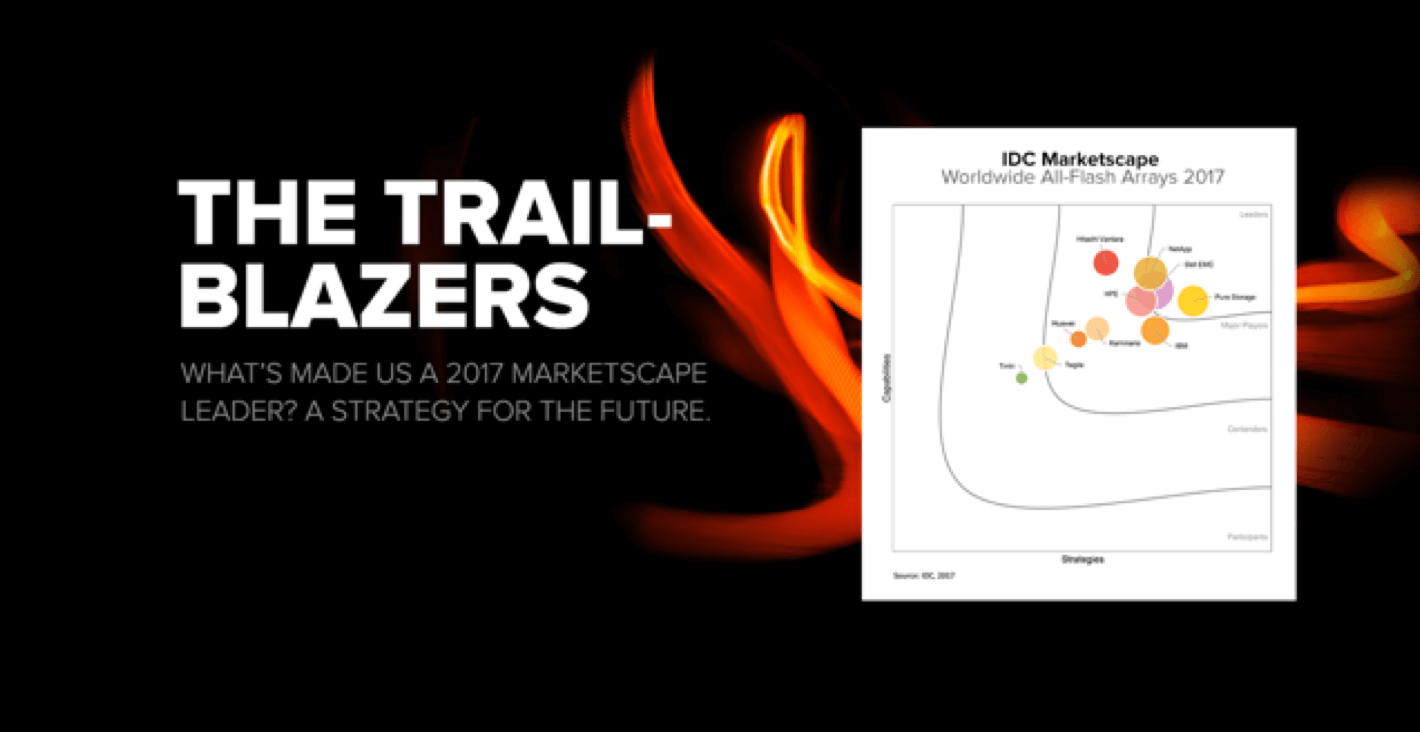

퓨어스토리지는 지난 한 해 올플래시 어레이 시장을 재정의하는데 주력해 왔습니다.

또한 클라우드 시대를 위한 플래시 어레이 제품인 비정형 데이터용 플래시블레이드(FlashBlade) 및 정형 데이터 워크로드용 100% NVMe 플래시어레이//X(FlashArray//X)를 출시했습니다.

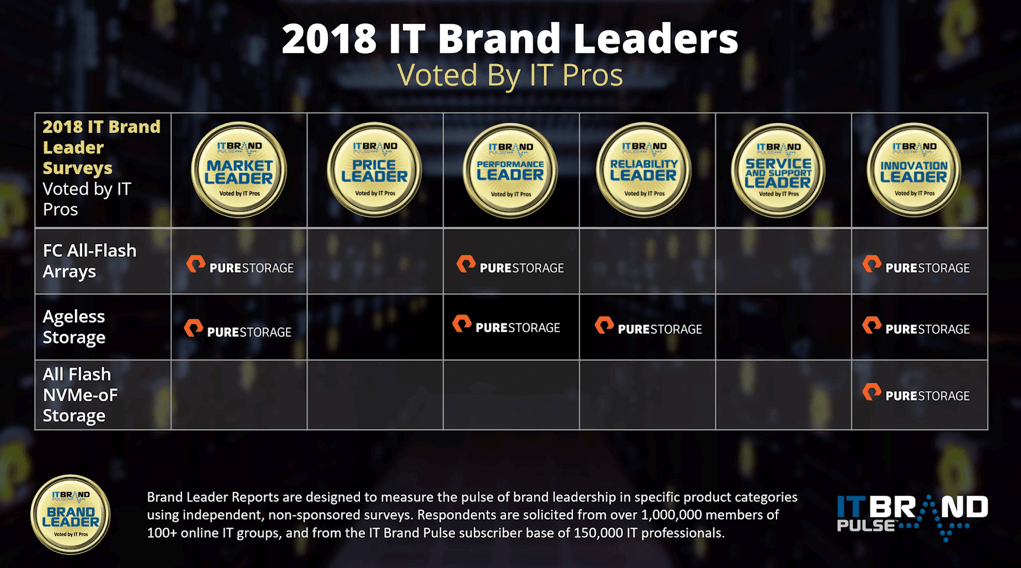

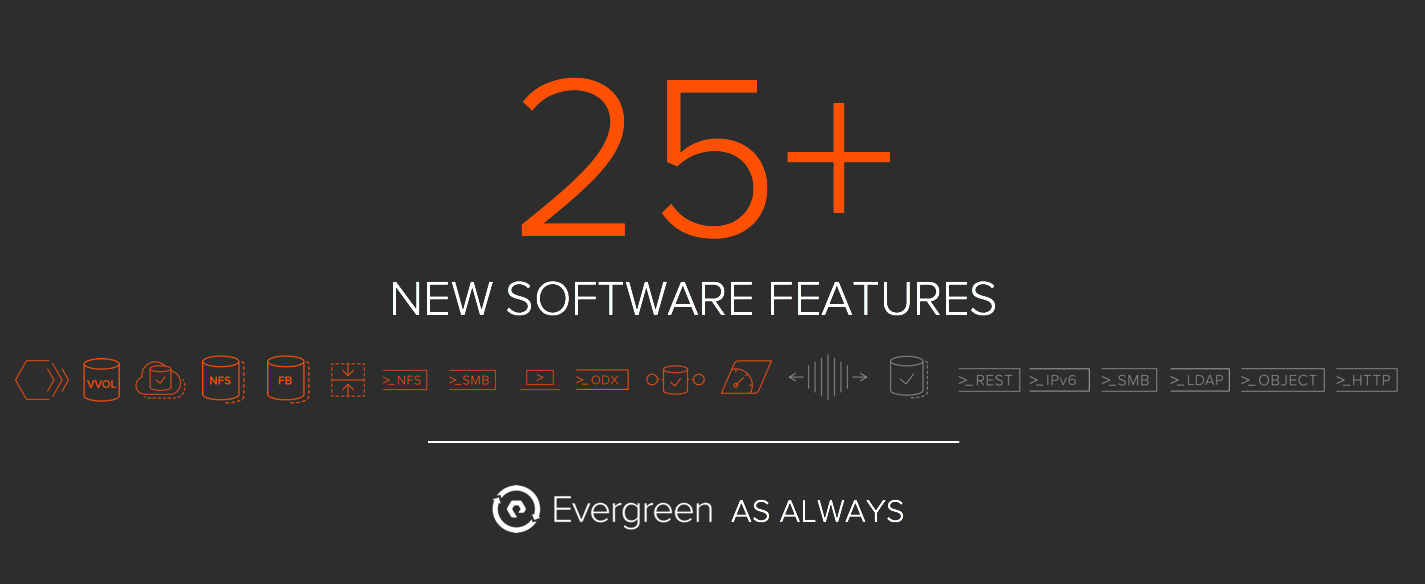

혁신을 가속화하는 이러한 올플래시 스토리지 플랫폼을 통해 퓨어스토리지의 엔지니어들은 25개가 넘는 새로운 기능을 추가하며 퓨어스토리지 설립 사상 최대 규모의 소프트웨어 출시 및 현대적인 클라우드 워크로드를 위한 티어1 스토리지 재정의, 네이티브 클라우드 통합, 플래시블레이드(FlashBlade) 규모 및 성능의 획기적인 향상, 빠른 속도의 오브젝트 지원, 셀프-드라이빙 스토리지에 대한 새로운 비전 실현 등을 통해 소프트웨어 역량을 한껏 발휘할 수 있는 기회를 얻었습니다.

새롭게 출시 된 퓨어스토리지의 이번 소프트웨어의 핵심은 퓨어스토리지의 대표적인 소프트웨어 퓨리티(Purity)의 주요 업데이트입니다. 플래시어레이 5.0(FlashArray 5.0)을 위한 퓨리티(Purity) 및 플래시블레이드 2.0(FlashBlade 2.0)을 위한 퓨리티(Purity)가 새롭게 발표되었습니다.

이번 글을 통해 공유드릴 사항이 많습니다.

퓨어스토리지만의 에버그린 스토리지(Evergreen Storage) 프로그램은 고객에게 다양한 혁신을 제공했습니다. 이 글은 10부작으로 된 시리즈의 첫 번째 글로서 퓨어스토리지의 신기능을 요약하고 새로운 기능을 보다 심층적으로 살펴볼 수 있는 기회를 제공합니다.

퓨어//액셀러레이트(Pure//Accelerate) 행사 개최 이후 후속 블로그 글을 통해 퓨어스토리지의 엔지니어 및 아키텍트들이 기술적인 측면에서 25개가 넘는 새로운 기능들에 대해 자세하게 설명할 예정입니다. 이번 블로그 시리즈가 여러분들에게 많은 도움이 되길 바랍니다!

클라우드 시대의 플래시: “올해의 소프트웨어” 출시 블로그 시리즈

- 퓨어스토리지 설립 사상 최대 규모의 혁신적인 소프트웨어 출시(이번 포스팅)

- 퓨리티(Purity) 액티브클러스터(ActiveCluster) – 모든 운영 환경에 적용 가능한 액티브-액티브 클러스터 솔루션

- 진정한 스케일 아웃 스토리지, 플래시블레이드(FlashBlade) – 5배 커진 용량, 5배 향상된 퍼포먼스

- 초고속 오브젝트 스토리지, 플래시블레이드(FLASHBLADE)

- 퓨리티(Purity) 클라우드스냅(CloudSnap) 기능을 활용한 네이티브 퍼블릭 클라우드 통합 방안

- VMware VVol의 간소화 – 클라우드에 최적화된 플래시 어레이에서의 VSPHERE 가상 볼륨 구축

- 퓨리티 런(Purity Run) – 내부 개발자를 위한 가상머신 및 컨테이너 구동을 위한 플래시어레이(FlashArray)

- 업계 혁신적인 NVMe 기술 도입, 그 이후는? 다이렉트플래시(DirectFlash) 쉘프 소개 및 NVMe/F 프리뷰

- 플래시어레이(FlashArray용 Windows File Services: 플래시어레이(FlashArray)에 완전한 SMB & NFS 탑재

- 다양한 업무 통합을 가능하게 하는 정책 기반 QoS

- 퓨어1 메타(Pure1 META): 셀프-드라이빙 스토리지를 가능하게 하는 퓨어스토리지의 AI 플랫폼

클라우드 시대를 위한 데이터 플랫폼

퓨어스토리지의 비전은 엔드-투-엔드 데이터 플랫폼을 통해 고객들이 플래시의 속도와 효율성으로 모든 데이터를 십분 활용할 수 있도록 하는 것입니다. 이를 위해 퓨어스토리지의 데이터 플랫폼은 기존 애플리케이션, 개발 및 테스트, 빅데이터 분석 및 현대의 웹스케일 앱을 구동하는데 필요한 매우 간단하고도 확장 가능한 블록, 파일 및 스토리지 서비스를 제공합니다.

또한 퓨어스토리지의 데이터 플랫폼은 퓨리티(Purity) 소프트웨어로 구동되며, 클라우드 시대의 플래시 어레이인 플래시어레이//X(FlashArray//X) 및 플래시블레이드(FlashBlade), 그리고 컨버지드 인프라인 플래시스택(FlashStack)으로 구성되어 있습니다. SaaS 기반의 관리 및 지원 스위트 퓨어1(Pure1)을 통해 관리되며, 퓨어1 메타(Pure1 Meta)의 AI로 더욱 끊임없이 스마트합니다.

최대 규모의 소프트웨어 발표와 함께, 퓨어스토리지는 3가지 중요 영역으로 비전을 확장했습니다.

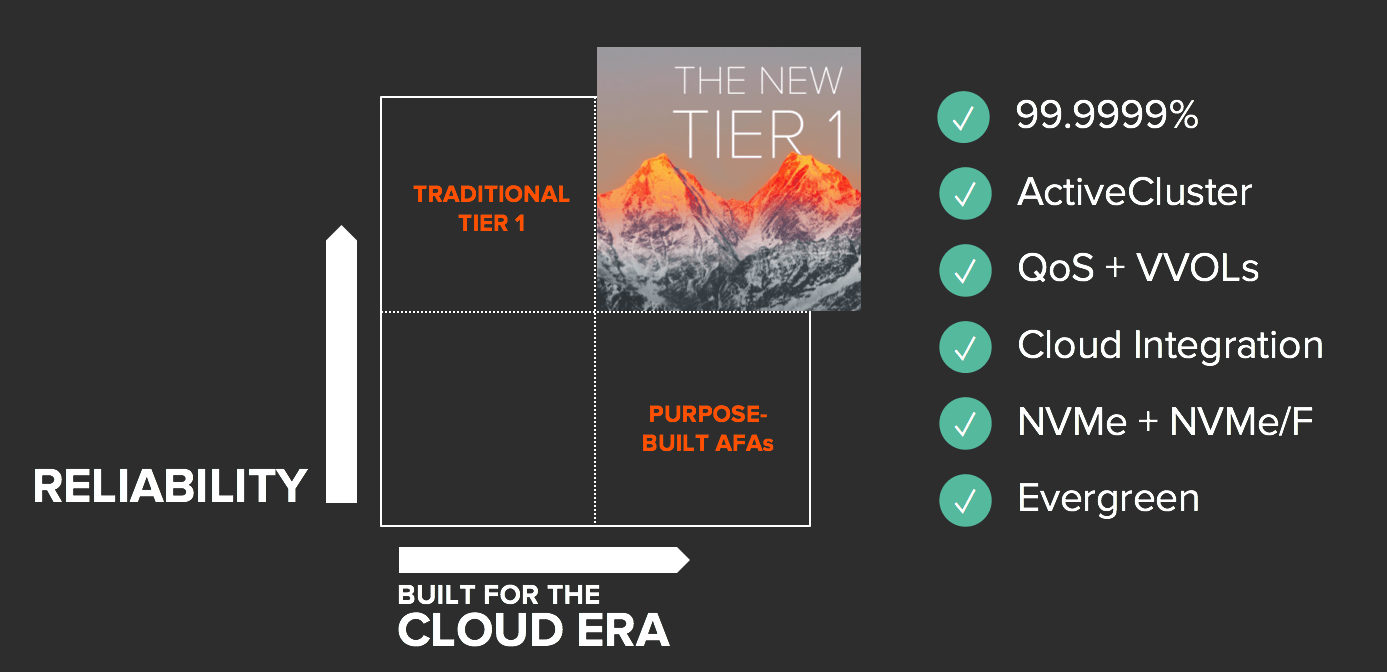

첫번째로 티어1 스토리지를 재정의하는 것에서부터 시작합니다. 최고 수준의 안정성을 제공하면서, 클라우드 시대를 위한 티어1 의 개념을 재고하고, 스토리지에서 안정성과 혁신 간의 타협을 종결시키는 것입니다.

두번째로 방대한 데이터 스토어로부터 통찰력을 확보하기 위해 실시간 분석, AI 및 머신러닝을 효과적으로 사용하는 방법을 배워, 어떻게 이를 통해 빅데이터를 통찰력으로 전환할지에 대해 비전을 제시하는 것입니다.

그리고 마지막으로 한층 진보된 AI 기술을 사용해 스토리지의 손쉬운 운영을 새로운 차원으로 끌어 올려, 퓨어스토리지의 간단함을 진정한 셀프-드라이빙 스토리지까지 확장하는 것입니다.

티어1 스토리지의 재정의

기존의 티어1 스토리지는 메트로 및 글로벌 복제 및 재해복구를 위해 99.9999%의 가용성 및 정교하고 복잡한 통합 옵션을 제공하며 안정성 측면을 가장 우선시 했습니다. 그러나, 고가에다 비효율적이고 복잡해서 빠른 혁신에는 적합하다고 볼 수 없었습니다.

이와 비교해 현대적인 올플래시 어레이는 데이터 절감, 간단함, 높은 성능, 그리고 보다 최근의 클라우드 통합 및 NVMe 기반의 플래시 혁신까지, 지난 5년간 클라우드 시대의 스토리지 혁신을 추진해왔습니다. 퓨어스토리지의 경쟁업체 제품은 고객이 고가용성과 현대적인 혁신 중 한 가지만 선택 하도록 하고 있습니다.

퓨어스토리지는 이렇게 선택을 강요하는 것이 옳지 않다고 생각합니다. 마침내 오늘, 퓨어스토리지는 타협을 거부하고 티어1 스토리지에 대한 기준을 새롭게 한 완전 통합 기능을 발표했습니다.

99.9999%의 가용성: 세대간 업그레이드 포함

티어1 스토리지는 안정성이 우선입니다.

그러므로 이 글에서도 안정성에 대한 이야기부터 시작해 보겠습니다. 플래시어레이//M(FlashArray//M)은 출시된 지 막 2년이 지났습니다. 플래시어레이//M (FlashArray//M)이 지난 2년 동안 99.9999%의 가용성을 달성했다는 소식을 자랑스러운 마음으로 전해드립니다.

뿐만 아니라, 플래시어레이(FlashArray)는 에버그린(Evergreen)을 통해 영속적으로 업그레이드를 제공합니다. 이러한 가용성은 스토리지가 정상적으로 운영될 때 뿐만아니라, 소프트웨어 및 하드웨어 업그레이드, 그리고 새롭게 출시된 100% NVMe 플래시어레이//X(FlashArray)//X)로의 업그레이드 같은 세대 간 업그레이드에도 보장됩니다.

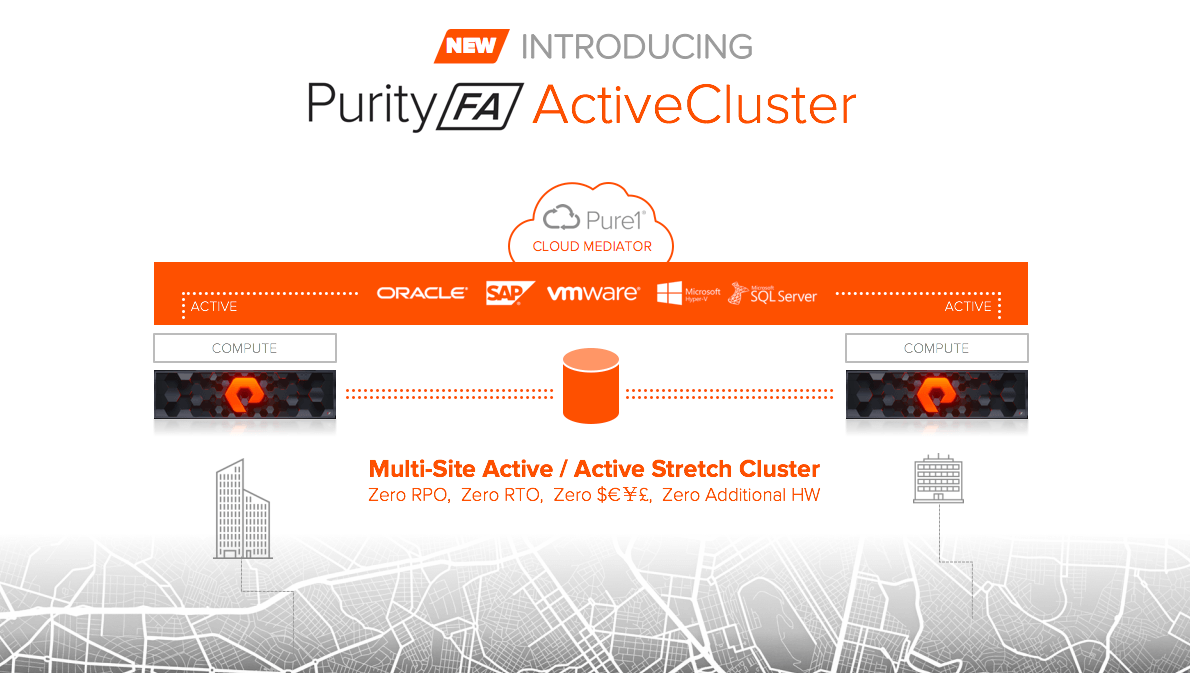

퓨리티(Purity) 액티브클러스터(ActiveCluster):

모든 것을 위한 진정한 액티브/액티브(active/active) 스트레치 클러스터

동기식 복제 및 메트로 클러스터링은 여전히 스토리지 안정성의 최고봉이라고 할 수 있지만, 비용 및 복잡성의 최고봉이기도 합니다. 오늘 퓨어스토리지는 퓨어스토리지만의 간단함을 갖춘 진정한 액티브/액티브(active/active) 스트레치 클러스터 솔루션인 액티브클러스터(ActiveCluster)를 출시했습니다. 액티브클러스터(ActiveCluster)는 퓨리티(Purity)에 완전하게 통합되어 에버그린(Evergreen) 업그레이드의 일부로서 추가 비용 없이 제공됩니다.

액티브클러스터(ActiveCluster)는 메트로 재해복구를 위한 간단함을 재정의합니다.

퓨어1(Pure1) SaaS를 통해 제공되는 클라우드 메디에이터(Cloud Mediator)를 이용하기 때문에 제3의 사이트는 필요하지 않습니다. 또한 간단한 4단계 절차를 통해 설정할 수 있으며, 하나의 명령만 퓨리티(Purity)에 추가하면 됩니다. 새로운 차원의 간단함이라고 할 수 있습니다! 액티브클러스터(ActiveCluster)의 간단함, 관리 및 3개 데이터센터 옵션에 대한 자세한 내용은 퓨어스토리지의 심층분석(Deep Dive) 블로그를 통해 확인할 수 있습니다.

정책 기반의 QoS: 안정적인 티어 통합

티어1 어레이는 통합을 위해 설계됐습니다. 플래시어레이(FlashArray)가 제공하는 효율성을 통해 다수의 스토리지 티어를 단일한 고집적도 및 고효율의 플래시어레이(FlashArray)로 통합하는 고객들이 늘고 있습니다. 그러나 모든 워크로드가 동일하게 생성되지는 않습니다. 워크로드 간의 경합이 발생할 땐 어떤 워크로드가 최고의 I/O 성능을 내도록 할지 구체적으로 지정해야 하는 경우가 있습니다.

QoS는 이러한 문제를 해결하기 위해 지난 10여년간 스토리지에 적용돼 왔지만, 복잡성 때문에 QoS 설정상의 오류가 발생하여 더 많은 문제를 야기하는 경우가 빈번하게 발생했습니다. 이에 퓨어스토리지는 일시적으로 스토리지 리소스를 과다하게 소모하여 전체 성능에 지장을 주는 애플리케이션 즉, ‘노이지 네이버(Noisy Neighbors)’로부터 시스템을 효율적으로 보호하기 위한 올웨이즈-온 QoS 기능을 퓨리티(Purity)에 도입했습니다. 그리고 이번에 새롭게 발표한 퓨리티(Purity) 버전부터 다양한 정책 기능을 통해 QoS가 한층 더 확장됐습니다.

이제 정책 기반의 QoS에 성능 등급을 설정할 수 있는 기능이 추가돼 금/은/동(Gold/Silver/Bronze) 워크로드를 손쉽게 차별화할 수 있습니다. 또한 정책 한계(Policy Limits) 기능이 볼륨을 기반으로 성능을 보다 세부적으로 제어할 수 있게 합니다. 이는 서비스 공급업체와 멀티테넌트 클라우드 구현에 적합합니다. 정책 QoS에 대한 자세한 내용은 심층분석(Deep Dive) 블로그를 참조하십시오.

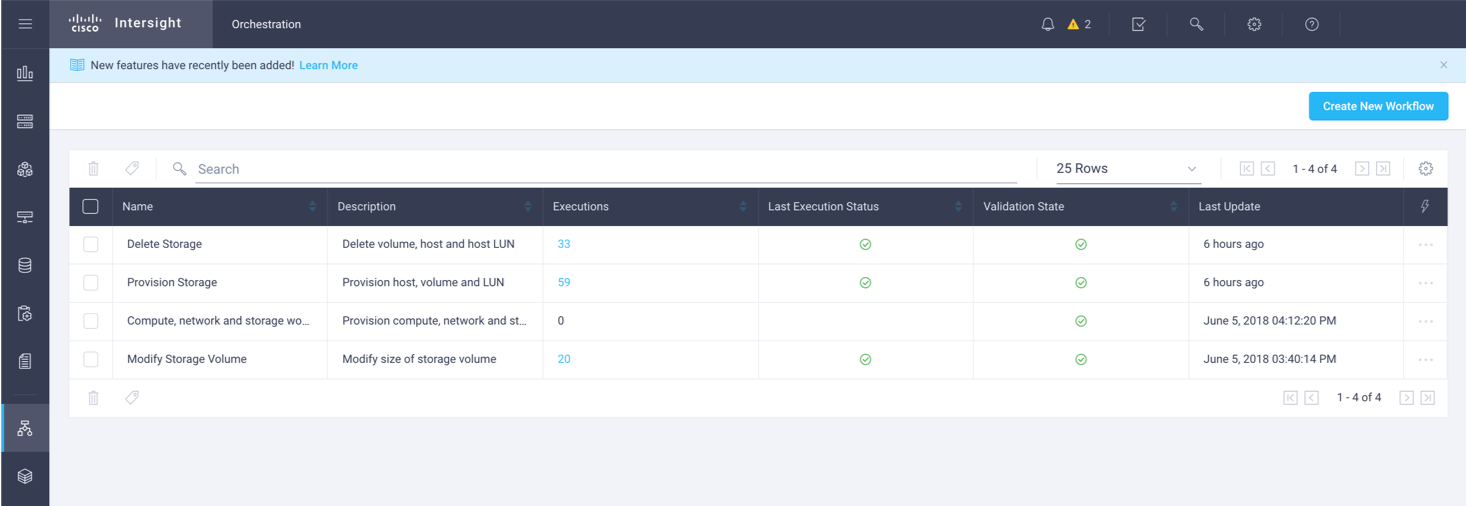

VMware VVOL: 손쉬운 클라우드 자동화

VVol과 VMware의 스토리지 정책 기반 관리(SPBM)는 vSphere 기반 클라우드 환경의 스토리지 관리를 대폭 향상시켜 줄 것으로 기대됩니다. VVol은 플래시어레이(FlashArray) 같은 스토리지 어레이에 각 VM별 가시성을 제공하여, 퓨어스토리지의 강력한 스냅샷, 복제, QoS, 마이그레이션 기술이 각 VM 수준에서 작동할 수 있도록 합니다.

이렇게 명확한 가치를 제공함에도, 시장에서 VVol의 수용은 더디게 이루어지고 있습니다. 이는 VVol에 대한 스토리지 업계의 열악한 지원 환경 때문입니다. 이제 퓨어스토리지에서 VVol을 경험해 볼 때입니다.

다시 한번 말씀 드리지만, 중요한 것은 간단함입니다.

퓨리티(Purity)에는 VASA Provider가 내장되어 VVol을 어레이에서 즉시 활성화해주며, 기본적으로 높은 가용성을 제공합니다. VVol은 5분 내에 쉽게 구현될 수 있으며, 플래시어레이(FlashArray)는 거의 즉각적으로 VMFS에서 VVol로의 마이그레이션을 수행합니다. VVol은 vSphere 내부 및 외부에서 VVol을 액세스할 수 있도록 지원할 수 있습니다. 보다 자세한 내용은 코디 호스터맨(Cody Hosterman)의 심층분석(Deep Dive) 블로그를 확인해보십시오.

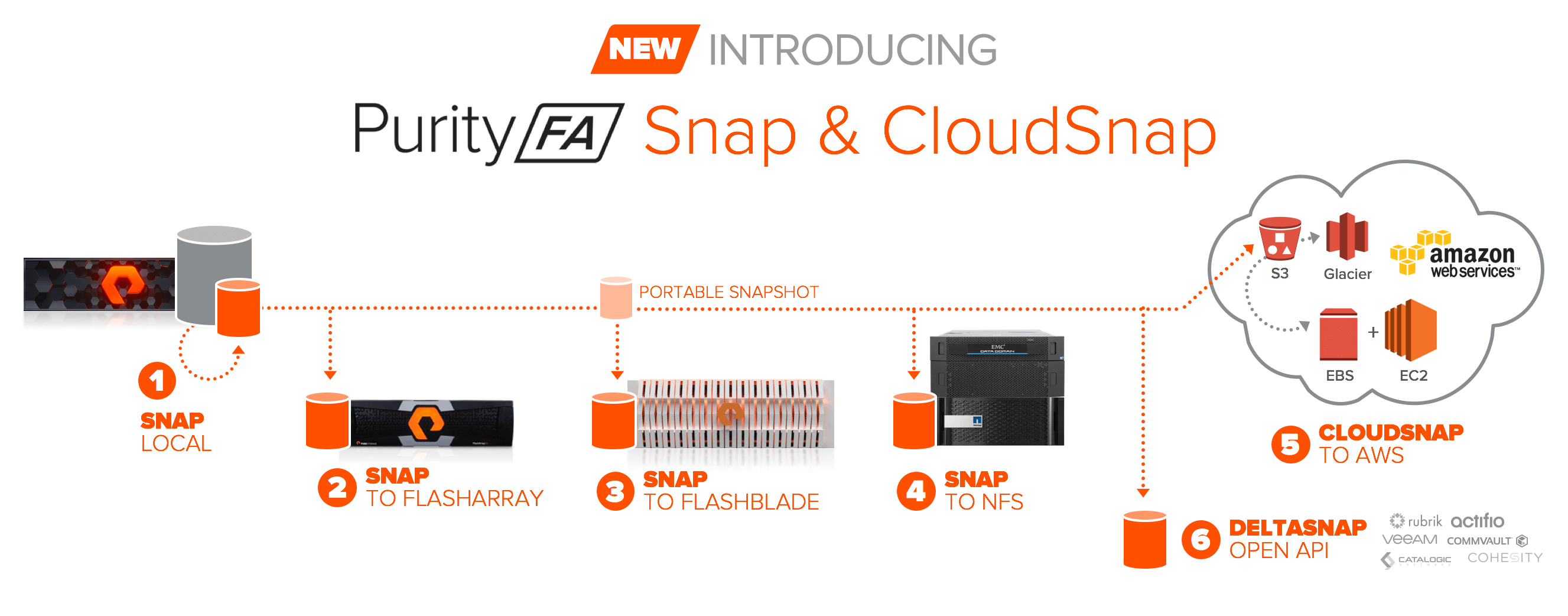

퓨리티(Purity) 클라우드스냅(CloudSnap):

퓨리티(Purity)의 스냅샷을 플래시블레이드(FlashBlade), NFS 및 퍼블릭 클라우드로 확장

이는 굉장한 일입니다! 퓨리티(Purity)는 고객들이 백업, 복구, 개발 및 테스트를 많이 활용할 수 있도록 지원하는 강력한 공간 절약형 스냅샷을 제공합니다. 또한 퓨리티(Purity)의 내장형 복제는 스냅샷이 재해복구에도 사용될 수 있도록 합니다. 그러나 많은 고객들은 저비용의 오프사이트 보존을 통해 퓨리티(Purity)의 스냅샷을 확장하도록 지원하는 기능을 요구해 왔습니다. 이제 퓨리티(Purity) 스냅샷은 플래시어레이(FlashArray) 외부에 위치한 새로운 목적지의 호스트로 원활하게 확장됩니다. 또한 퓨리티(Purity)의 보호 정책으로 모든 것이 완벽하게 관리될 수 있습니다.

이를 가능하게 만들기 위해 퓨리티(Purity) 스냅은 새로운 포터블(Portable) 스냅샷 포맷을 지원할 수 있도록 업데이트 됐습니다. 이 스냅샷은 복구 메타데이터에 포함됩니다. 스냅샷은 플래시블레이드(FlashBlade)뿐 아니라 구형 NetApp 파일러 또는 Data Domain 등 모든 일반적인 NFS 타겟으로 이동될 수 있습니다. 또한 효과적이고 원활한 관리 및 간단한 복구를 제공합니다. 이외에도 퓨어스토리지는 클라우드스냅(CloudSnap)을 출시했습니다.

클라우드스냅(CloudSnap)은 스냅샷을 퍼블릭 클라우드로 이동시켜 백업, 재해복구, 마이그레이션 및 개발에 사용할 수 있도록 합니다. 마지막으로, 퓨어스토리지는 개방형 DeltaSnap API를 개발했습니다. 이 API는 업계 최고의 이종 데이터 보호 공급업체들로 구성된 포괄적인 에코시스템에 퓨리티(Purity)를 기본적으로 통합하며, 공간 효율성을 유지하면서 스냅샷을 관리 및 이동할 수 있도록 지원합니다. 좋은 기능들이 매우 많아 이 글에 다 적을 수가 없습니다. 보다 자세한 내용은 심층분석(Deep Dive) 블로그를 참조하시기 바랍니다.

퓨리티 런(Purity Run) – 플래시어레이(FlashArray)에서 코드를 실행하는 개방형 플랫폼

퓨어스토리지는 퓨리티 런(Purity Run)을 하이퍼컨버지드 인프라로 명명하지 않지만, 여러분은 그럴 수 있습니다. 퓨리티 런(Purity Run)의 핵심은 고객이 자사의 웹스케일 아키텍처에 플래시어레이(FlashArray)를 새롭고 흥미로운 방식으로 통합할 수 있도록 해주는 개방형 개발 플랫폼을 생성해주는 것입니다. 고객들이 이 플랫폼을 사용해 무엇을 할 수 있을지 정말 기대됩니다!

애플리케이션이 최적화된 방식으로 플래시어레이(FlashArray)와 통신할 수 있도록, 맞춤형 스토리지/메시징 프로토콜을 구현하고자 한다면? 엣지 장치가 IoT 데이터를 홈으로 전송하기 전에 로컬 시스템에서 분석하려고 한다면? 보다 높은 성능을 위해 분석 마이크로서비스를 스토리지 바로 옆에 있는 데이터 파이프라인에서 실행하고자 한다면? 스토리지 어레이가 Docker/Kubernetes 환경에서 직접 구동돼 컨테이너를 자체적으로 호스트할 수 있다면?

퓨리티 런(Purity Run)을 통해 이 모든 것이 가능해집니다.

퓨리티 런(Purity Run)은 완전한 보안을 제공하고 성능을 격리하는 기능뿐 아니라, 전용 CPU 및 메모리 리소스 세트를 제공하여 맞춤형 VM 및 컨테이너를 고가용성 아키텍처상의 플래시어레이(FlashArray)에서 구동할 수 있도록 지원합니다. 개발자 여러분, 이제 플래시어레이(FlashArray)를 마음껏 사용하십시오! 퓨리티 런(Purity Run)에 대한 보다 자세한 내용은 심층분석(Deep Dive) 블로그를 통해 확인할 수 있습니다.

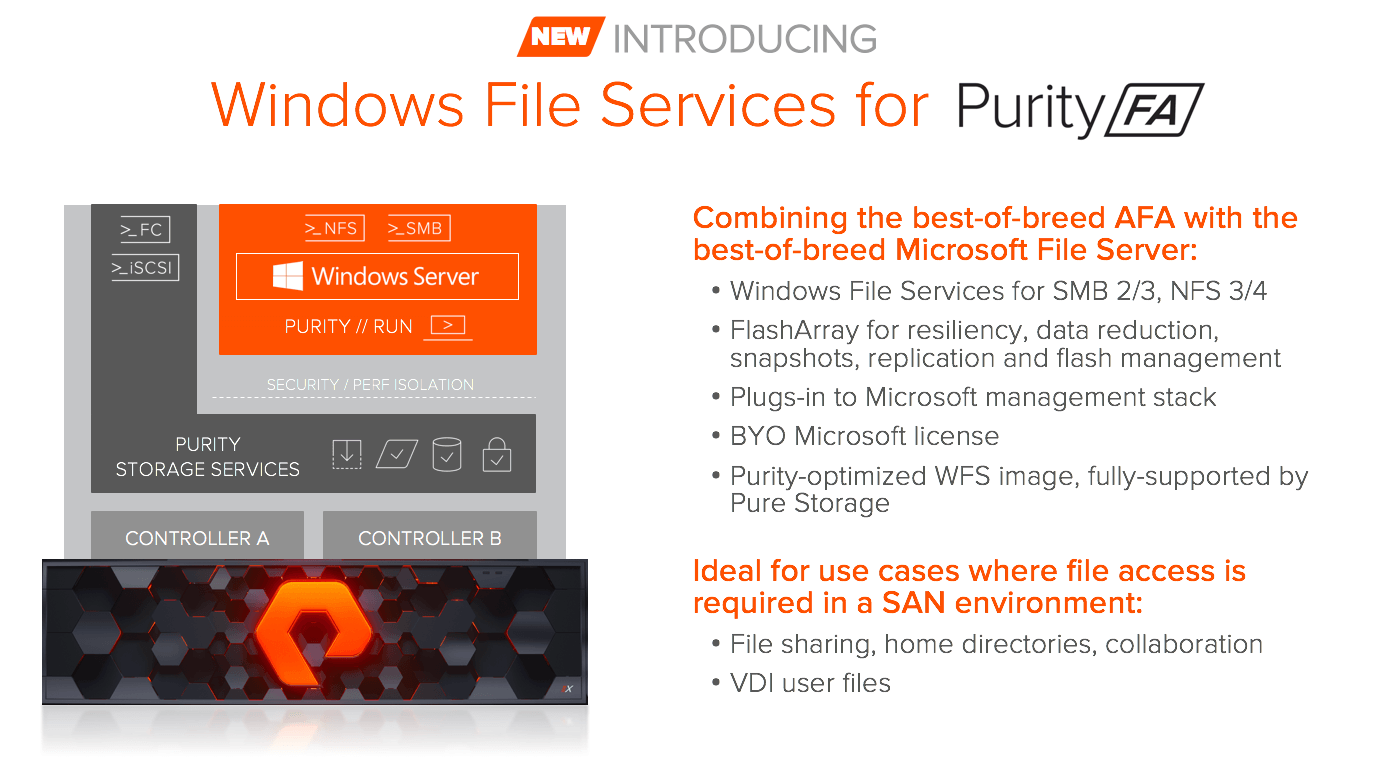

퓨리티(Purity)용 Windows File Services: 업계 최고의 서버 메시지 블록 및 NFC가 플래시어레이(FlashArray)로!

퓨리티 런(Purity Run)은 플래시어레이(FlashArray)를 폭넓게 확장할 수 있도록 합니다. 고객들은 플래시어레이(FlashArray)에 파일을 통합하는 것에 대해 자주 요청을 합니다. 고객들은 주로 플래시어레이(FlashArray) 시스템을 블록으로 구현하지만, 파일 형식의 데이터를 플래시어레이(FlashArray) 플랫폼에 통합할 것을 요구하기도 합니다.

시장 설문조사 결과, 가장 견고하고 간단하면서도 기능이 풍부한 서버 메시지 블록(SMB)을 구현한 것은 Windows File Server(WFS)였습니다. 그래서 Microsoft와 협력 관계를 맺고 WFS를 플래시어레이(FlashArray)에 결합했습니다.

퓨어스토리지의 대규모 NFS 제품은 플래시블레이드(FlashBlade)라는 사실을 명확히 해두겠습니다. 그리고 플래시블레이드(FlashBlade)의 업데이트에 대해 아래에서 좀 더 상세히 설명하도록 하겠습니다. 그러나 통합 구현의 경우 WFS는 성숙한 파일 서비스를 플래시어레이(FlashArray)에 추가해주며, Microsoft의 관리 에코시스템에도 부합합니다. 퓨리티(Purity)용 WFS는 퓨어스토리지를 통해 완전하게 지원되며, 파일 공유, 홈 디렉토리, 협업 및 VDI 사용자 파일을 위한 이상적인 솔루션입니다. 또한 기존 Microsoft Windows Server 라이선스 계약을 활용할 수도 있습니다. 퓨리티(Purity)용 WFS에 대한 자세한 내용은 심층분석(Deep Dive) 블로그를 참조하십시오.

퓨어스토리지의 NVMe 리더십 확장: 다이렉트플래시(DirectFlash) 쉘프 및 NVMe/F 프리뷰

퓨어스토리지는 지난달 플래시어레이//X(FlashArray//X) 및 소프트웨어 정의 다이렉트플래시(DirectFlash) 모듈을 통해 기업들의 주요 시스템 구축을 지원하는 업계 최초의 100% NVMe 올플래시 어레이를 발표하여 업계의 많은 관심을 모았습니다. 플래시어레이//X(FlashArray//X)를 둘러싼 관심은 상당히 뜨거웠습니다. 티어1 엔터프라이즈 워크로드의 성능 수준을 한 단계 더 높이 끌어 올렸을 뿐 아니라, ToR 아키텍처로 웹스케일 DAS(직접 연결 스토리지) 플래시 워크로드를 타겟으로 삼을 수 있게 해주기 때문입니다.

플래시어레이//X(FlashArray//X)를 출시한 후, 몇 가지 질문을 지속적으로 받았습니다. 어떻게 플래시어레이//X(FlashArray//X)를 섀시 밖으로 확장할 수 있을지, 그리고 어떻게 NVMe/F를 사용해 호스트에 연결할 수 있을지에 대한 내용이었습니다. 그 질문에 대한 답을 지금 해드리겠습니다.

먼저, 다이렉트플래시(DirectFlash) 쉘프가 출시될 예정입니다.

다이렉트플래시(DirectFlash) 쉘프는 내장된 NVMe/F 50Gb/초 이더넷을 통해, 퓨어스토리지의 NVMe 아키텍처를 플래시어레이//X(FlashArray//X) 섀시에 연결된 확장 쉘프로 확장해줍니다. 다이렉트플래시(DirectFlash) 쉘프는 18.3테라바이트 다이렉트플래시(DirectFlash) 모듈을 사용하는 경우 28개의 모듈을 단일한 3U 확장 섀시에 탑재해, 최대 512TB 및 가용 용량 1.5PB의 원시 플래시를 제공합니다.

고집적도란 바로 이를 두고 하는 말입니다! 다이렉트플래시(DirectFlash) 쉘프는 4분기에 출시될 예정이지만, 플래시어레이//X(FlashArray//X)를 통해 바로 오늘 NVMe 을 시작할 수 있습니다!

또한, 퓨어//액셀러레이트(Pure//Accelerate) 행사에서 Cisco와 엔드-투-엔드 NVMe를 공동 시연을 할 예정입니다. RoCEv2 프로토콜 및 NVMe-over-Fabrics를 사용해 초당 40Gb로 Cisco UCS 서버를 플래시어레이//X(FlashArray//X)로 연결합니다. 이는 서버에서 공유되는 플래시 칩까지의 ‘올’NVMe 경로를 제공합니다.

NVMe/F는 아직 초기 단계의 기술이기 때문에 퓨어스토리지는 출시 날짜를 확정하지 않았습니다. 그러나 NVMe/F가 현재 제대로 작동하고 있다는 것을 확인하실 수 있으실 것입니다.

계속 지켜봐주시기 바랍니다.

서버-DAS 플래시의 비용과 부담을 제거해주고 ToR 스토리지로 옮겨가는 이 조합에 클라우드 서비스 공급업체와 SaaS 고객들이 상당한 관심을 보였습니다. 이렇게 높은 수준의 집적도를 제공하는 랙을 통해 클라우드를 구축한다고 상상해보십시오. 애플리케이션이 필요로 하는 모든 확장 가능한 블록, 파일 및 오브젝트 서비스를 제공할 수 있습니다. 이미 이를 구현한 고객들도 있습니다.

새로운 티어1 구현!

여기까지가 새로운 티어1에 대한 퓨어스토리지의 비전입니다. 이는 미래의 클라우드 스케일 애플리케이션을 구축하고 기존 애플리케이션을 현대화하는데 필요한 모든 혁신을 제공하는 동시에 안정성 및 간단함을 새로운 차원으로 끌어 올리는 것입니다.

퓨어스토리지는 에버그린(Evergreen) 업그레이드를 통해 이러한 새로운 티어1의 모든 기능을 올해 안에 플래시어레이(FlashArray) 고객들에게 제공할 예정입니다. 추가 비용은 필요하지 않습니다. 이 기능들은 퓨리티//FA 5.0(Purity//FA 5.0) 이상의 모든 릴리즈에 탑재될 예정입니다. 이 기능들을 사용 가능한 시기에 관해서는 아래를 참조하십시오.

여기까지가 퓨어스토리지가 공유하는 전체 내용의 1/3에 해당합니다. 이 다음부터는 플래시블레이드(FlashBlade) 및 퓨어1(Pure1)에 대한 내용입니다.

빅데이터에서 통찰력까지

우리는 지금 데이터의 세계에서 가장 흥분되는 시기에 살고 있습니다. 대용량 데이터를 어떻게 활용하고 실시간으로 분석할 수 있는지를 막 배우기 시작하고 있는데, 어디선가 불쑥 AI와 머신러닝(ML) 기반의 접근방식이 나타나 인간의 인지 수준을 훌쩍 뛰어 넘는 가능성을 기약해주고 있습니다. 데이터의 통찰력이 제공하는 비즈니스 인사이트는 스토리지 크기, 속도 및 효율성 등 스토리지와 불가분하게 엮여 있습니다.

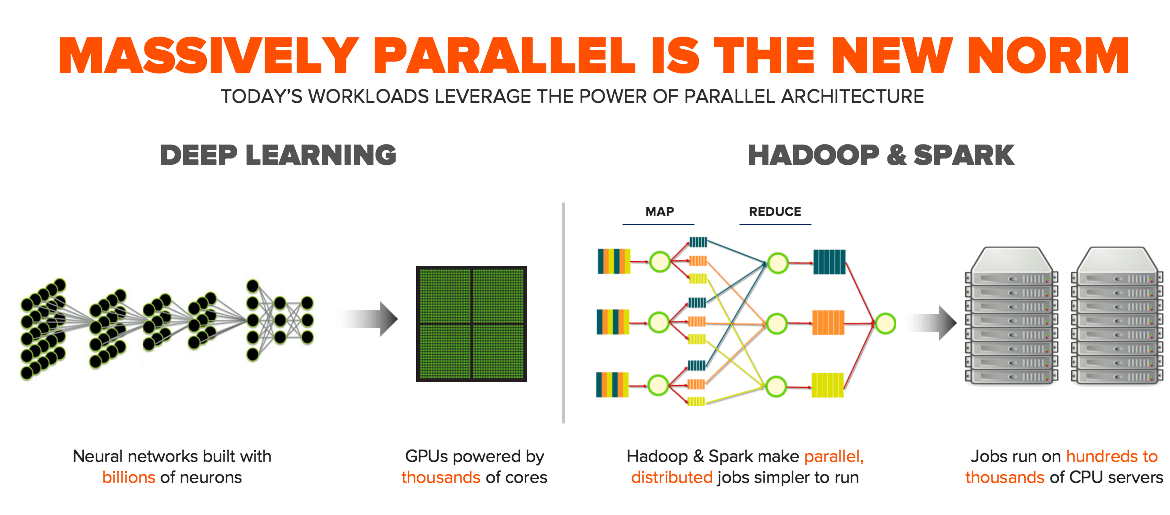

빅데이터와 통찰력의 새로운 세상에 관한 공통적인 견해가 있다면, 방대한 병렬성이 새로운 표준으로 자리매김했다는 것입니다.

새로운 데이터 애플리케이션은 방대한 병렬적 아키텍처에 구축돼 멀티 코어 CPU와 GPU의 혜택을 누리고 있지만, 이전 세대의 스토리지는 이를 제대로 지원할 수 없습니다. 퓨어스토리지가 플래시블레이드(FlashBlade)를 구축한 이유입니다.

작년 퓨어//액셀러레이트(Pure//Accelerate)에서 플래시블레이드(FlashBlade)를 출시한 후, 퓨어스토리지는 데이터의 잠재적 가능성을 바꾸어 놓았습니다. 정말 대단한 한 해를 보냈습니다. 플래시블레이드(FlashBlade)는 이제 AI 슈퍼컴퓨터를 구동하고, 초고속 레이싱카의 설계를 가능하게 하며, 방대한 웹스케일 아키텍처의 기반이 될 뿐 아니라, 차세대 비행기, 열차, 자동차 및 로켓을 설계, 시뮬레이션 및 구동할 수 있도록 지원합니다.

이러한 활용 사례에서는 스토리지의 보다 향상된 성능, 확장성 및 유연성이 요구됩니다. 그래서 오늘 퓨어스토리지는 플래시블레이드 2.0(FlashBlade 2.0) 및 주요 소프트웨어 업데이트를 발표하여 스스로 세운 기준을 뛰어넘고 있습니다.

75개의 블레이드로 확장: 5배 더 큰 용량, 5배 더 빠른 속도

기업들의 급속한 플래시블레이드(FlashBlade) 도입에 놀랐습니다. 흥미로운 활용 사례에 사용된다는 점뿐 아니라 고객들의 수요 및 요구사항이 부쩍 늘었다는데 놀랐습니다. 플래시블레이드 1.0(FlashBlade 1.0)은 15개의 블레이드까지 확장을 선형적으로 지원했는데, 이번에 출시된 2.0은 그 5배나 되는 75개 블레이드로의 선형적 확장을 지원합니다!

플래시블레이드(FlashBlade)는 ‘진정한’ 선형적 확장을 제공합니다.

블레이드를 추가하면 용량, I/O 성능, 메타데이터 성능 및 호스트 연결 대역폭이 향상됩니다. 그렇기 때문에 이번 2.0 릴리즈는 놀라운 수준으로 증가한 용량 및 성능 관련 수치를 기록하고 있습니다. 플래시블레이드 2.0(FlashBlade 2.0)은 75GB/초 읽기, 25GB/초 쓰기 및 750만 IOPS를 제공하며, 하나의 네임스페이스에서 8PB를 제공합니다.

지난해 제품 출시를 앞두고 퓨어스토리지는 1백만 NFS Ops를 약속했었습니다. 플래시블레이드 2.0(FlashBlade 2.0)은 지난해의 약속과 비교해 실제로 5배 이상 향상된 I/O 성능을 제공합니다. 소프트웨어 개선을 통해 올플래시의 성능을 초기 기대치를 넘는 수준으로 수평적으로 확장했습니다.

100Gb/초 속도의 통합 및 완전히 관리되는 소프트웨어 정의 패브릭이 이러한 새로운 수준의 올플래시 규모를 가능하게 합니다. 이러한 대규모 올플래시를 구동할 수 있는 수퍼사이즈의 랙이 없다고 걱정하실 필요가 없습니다. 15개 블레이드씩 결합한 섀시 5개를 사용해 표준 랙 마운트 구조로 구현이 가능합니다.

기억하십시오. 플래시블레이드(FlashBlade)는 간단하게 확장될 수 있습니다. 7개 블레이드에서 시작해서 한번에 블레이드 한 개씩 최대 75개 블레이드까지 확장될 수 있습니다. 75개 블레이드로의 확장에 대한 자세한 내용은 심층분석(Deep Dive) 블로그를 참조하십시오.

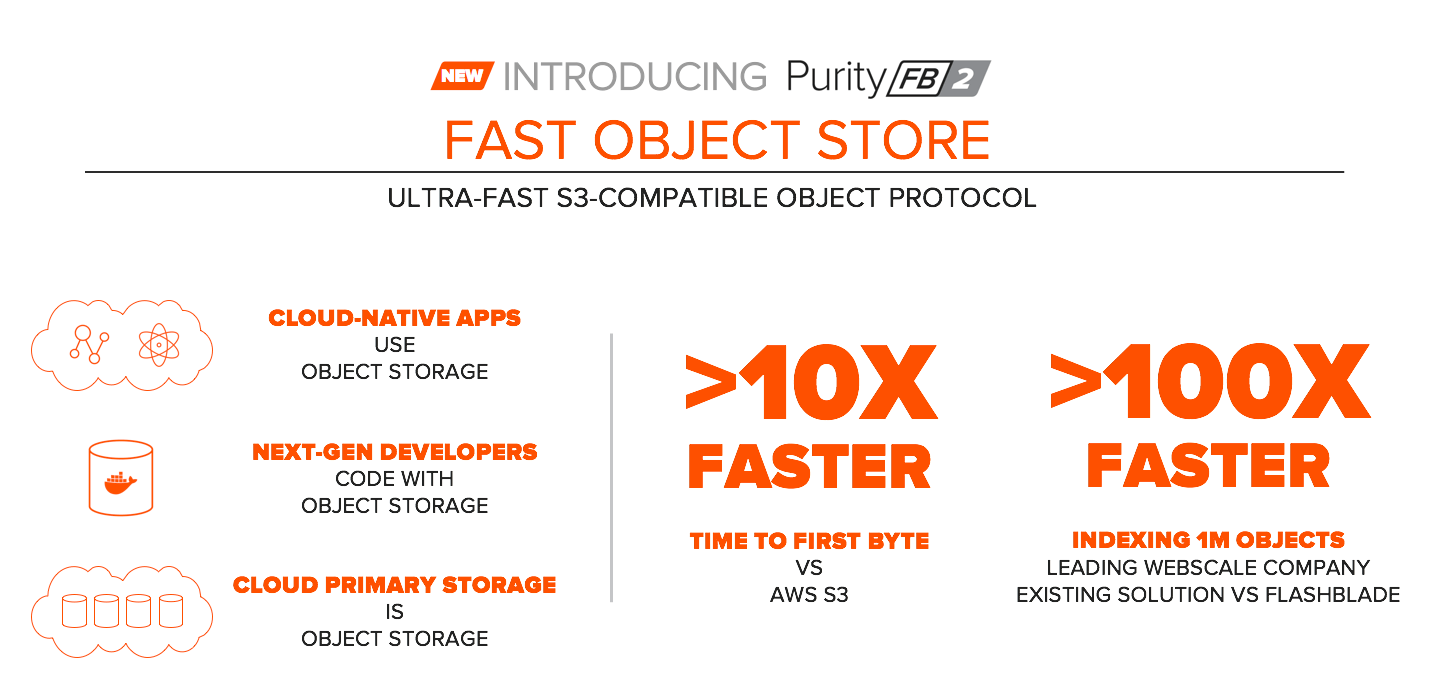

속도가 빠른 오브젝트: 미래의 웹스케일 애플리케이션 지원

클라우드 스토리지는 대부분 오브젝트 스토리지입니다. 퓨어스토리지는 차세대 개발자들에게 뭔가 설계하려면 “오브젝트를 우선적으로”으로 고려하도록 가르칩니다. 그러나 지금까지의 오브젝트 스토리지는 비용이 적게 들고 속도가 느린 활용 사례에 최적화되어 속도가 너무 느렸습니다. 이제 더 이상은 아닙니다. 플래시블레이드(FlashBlade)가 이제 빠른 속도의 오브젝트를 선보입니다. 이전과는 다른 새로운 고성능 데이터 및 클라우드 분야 활용 사례를 위한 준비가 됐습니다.

빠르다는 것은 정말 빠른 속도를 의미합니다. 클라우드 오브젝트 스토어보다 10배 이상 빠릅니다. 어떤 웹스케일 베타 고객의 경우 플래시블레이드(FlashBlade) 기반 오브젝트를 통해 온프레미스 디스크 기반 오브젝트 스토어와 비교해 100만 개의 이미지 오브젝트를 100배 빠른 속도로 인덱싱하고 있습니다.

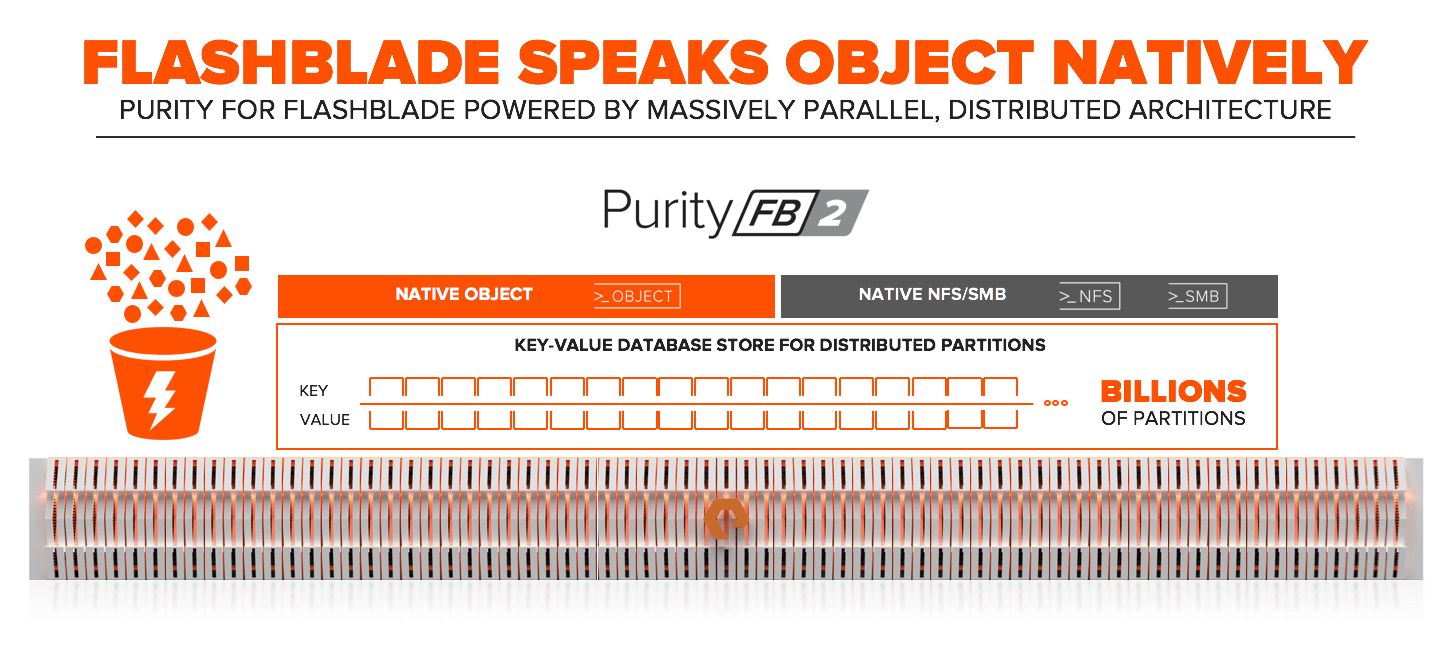

플래시블레이드(FlashBlade) 오브젝트가 빠른 이유 중 하나는 플래시블레이드(FlashBlade)가 말 그대로 오브젝트를 위해 설계됐기 때문입니다. 플래시블레이드(FlashBlade)는 최고의 확장성을 갖춘 백엔드의 오브젝트 스토어에 구축됐으며, NFS 및 SMB 파일 및 오브젝트 프로토콜이 단순 결합되는 것이 아니라 네이티브 오브젝트 스토어를 공유합니다.

개발자 여러분, 플래시블레이드(FlashBlade)는 여러분을 위한 스토리지입니다. 플래시블레이드(FlashBlade) 오브젝트에 대한 자세한 내용은 심층분석(Deep Dive) 블로그를 참조하십시오.

플래시블레이드(FlashBlade)의 활용 사례 확장:

지속적이며 안정적인 기능 및 새로운 중간 규모 블레이드 제공

앞서 말씀 드린 대규모 시뮬레이션, 분석, 웹스케일 오브젝트 및 AI/ML 활용 사례 외에도, 의료영상저장전송시스템(PACS), 대규모 소프트웨어 구현, 재무 분석, 데이터 웨어하우징, 미디어/게임 개발 및 심지어 경찰들이 사용하는 작은 휴대용 카메라까지, 플래시블레이드(FlashBlade)가 다양한 활용 사례에 도입돼 활용되고 있습니다.

플래시블레이드(FlashBlade)의 활용 사례를 확장하는데 가장 중요한 요소는 IT 관리자들이 기대하는 “엔터프라이즈” 기능입니다. 퓨리티//FB 2.0(Purity//FB 2.0) 릴리즈는 이러한 기능을 포함합니다.

네이티브 SMB 및 HTTP 지원은 프로토콜로까지 확장되며, IPv6는 현대적인 네트워킹을, NLM은 클러스터링된 애플리케이션에 대한 NFS 액세스를 가능하게 해줍니다. LDAP는 보안 관리를 간소화합니다. 가장 중요한 점은 스냅샷이 파일 시스템의 신속한 백업 및 복구를 지원한다는 것입니다.

5배의 규모, 5배의 성능, 속도가 빠른 오브젝트 및 엔터프라이즈 기능, 그것이 바로 플래시블레이드 2.0(FlashBlade 2.0)을 위한 퓨리티(Purity) 입니다.

셀프-드라이빙 스토리지

자율주행 자동차가 점점 현실로 다가오듯 우리 주변에서는 기술의 진보가 빠르게 진행되고 있습니다. 스토리지 업계에는 이러한 AI 기술을 스토리지에도 어떻게 적용할 수 있을까에 대한 비전이 존재하는데, 이는 매우 흥미롭습니다.

자율주행 자동차가 실현되기 위해서는 다음과 같은 세 가지 중요한 사항이 구현돼야 합니다.

- 자율주행에 대한 완전한 비전이 현실화되기 전에 먼저 안전 및 용이함을 위해 자율 기술을 활용해야 할 것입니다.

- 어려운 부분은 자동차를 구동하는 부분이 아니라, 세상을 감지하고 이해하여 내부적인 문제를 해결 및 예방하는 것입니다.

- 이는 전역적 데이터 문제입니다. 자동차 한 대가 아니라 수많은 자동차로 구성된 전역적 네트워크를 이해함으로써 가능해집니다. 그리고 이러한 정보를 서로 연결해야 자율주행이 한층 더 강력해질 수 있습니다. (교통 최적화를 생각해보십시오.)

퓨어스토리지의 셀프-드라이빙 스토리지는 이러한 세 가지 측면에서 자율주행 자동차와 명확한 공통점이 존재합니다.

먼저, 퓨어스토리지의 셀프-드라이빙 스토리지 개발은 한참 진척된 상태입니다. 처음부터 모든 것을 간소화하고, 사용자의 개입을 최소화하며, 어레이가 자체적으로 결정을 내릴 수 있게 만들었기 때문입니다.

두 번째로, 스토리지 관리자가 어려움을 토로하는 부분은 어레이 관리가 아닙니다. (적어도 퓨어스토리지 고객이라면 그렇습니다!) 어레이의 주변 세상 즉, 변화하는 예측 불가능한 워크로드를 처리하는 것이 문제입니다.

세 번째로, 퓨어스토리지는 세계적인 스토리지 공급업체로서 전체 사용자 기반에 걸쳐 워크로드를 확인하고 이해할 수 있는 역량을 보유하고 있습니다. 퓨어스토리지가 모든 사용자들의 워크로드를 학습하여 고객의 워크로드를 보다 잘 이해하고 최적화할 수 있을까요?

우리는 할 수 있다고 생각합니다.

이는 오늘 퓨어스토리지가 발표한 퓨어1 메타(Pure1 META)의 지원을 통해 가능합니다. 퓨어1 메타는 퓨어의 AI 엔진으로서 예측적 통찰력를 제공하여 스토리지를 더 잘 관리, 자동화 및 지원할 수 있습니다.

퓨어1 메타(Pure1 META)는 데이터로 시작하고, 데이터에 구축됩니다. 지난 몇 년 동안 퓨어스토리지는 IoT 기업으로서의 역할을 심각하게 받아들였습니다. 1천여 대의 어레이가 연결된 글로벌 네트워크를 구축했으며, 이를 통해 성능 및 운영 메타데이터를 퓨어스토리지로 전송해 왔습니다. 그리고 그 데이터를 고객이 유용하게 사용하며, 그 데이터를 통해 퓨어스토리지의 엔지니어링 팀이 보다 나은 제품을 구축할 수 있도록 지원하는데 많은 노력을 기울여 왔습니다. 퓨어1 메타(Pure1 META)는 이제 매일 1조개 이상의 데이터 포인트를 수집하여 퓨어스토리지의 데이터 레이크에 공급합니다.

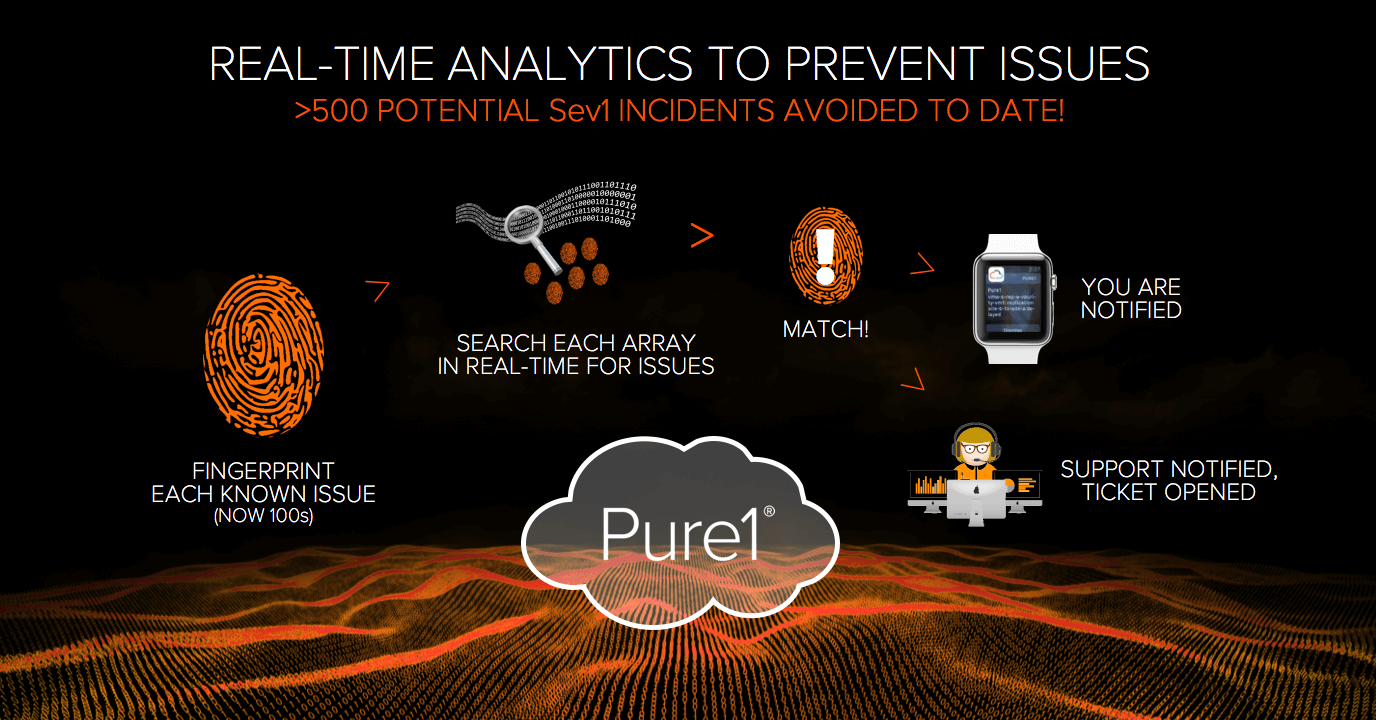

작년부터 퓨어스토리지는 글로벌 사용자 기반을 지속적으로 스캔하여 실시간으로 문제를 파악하는 ‘이슈 핑거프린트(Issue Fingerprints)’ 기반의 예측적 기술 지원을 제공하고 있습니다.

퓨어스토리지의 목표는 실질적인 장애가 발생하기 전에 먼저 업스트림에서 문제를 발견하고, 문제가 될 수 있는 변경 사항을 자동적으로 파악하는 것입니다. 예측적 기술 지원을 시작한 이래, 퓨어스토리지는 잠재적인 1단계 지원 500여 건을 파악하고 사전에 방지했습니다. 그리고 퓨어스토리지의 핑거프린트(Fingerprints)는 더욱 더 스마트해졌습니다.

현재 이 핑거프린트(Fingerprints)는 알려진 이슈에 기반하여 수동으로 생성됩니다. 퓨어1 메타(Pure1 META)의 AI 엔진은 머신러닝 기반 통찰력을 통해 문제를 발견하고 인간의 인지 수준을 넘어서는 이슈 및 데이터 간의 상관관계를 기반으로 핑거프린트(Fingerprints)를 생성할 수 있도록 지원합니다.

그러나 퓨어1 메타(Pure1 META)의 정말 흥미로운 부분은 용량 및 성능 측면에서 워크로드를 보다 잘 이해할 수 있게 된다는 것입니다. 이제까지는 어림 짐작으로 워크로드의 성능 규모를 산정해왔습니다.

성능 규모 산정은 거의 불가능합니다. 이해해야 하는 변수가 수천 여개 입니다. (IOPS, 대역폭, 응답시간, IO 혼합, 로컬 데이터 및 데이터 절감 등) 이는 인간이 할 수 없는 일입니다. 그래서 불필요하게 오버 사이즈 스토리지를 구축하여 비용을 과도하게 낭비하거나, 계산 착오로 언더 사이즈 스토리지를 구축해 성능 경합, 심지어 가동 중단이 발생했습니다.

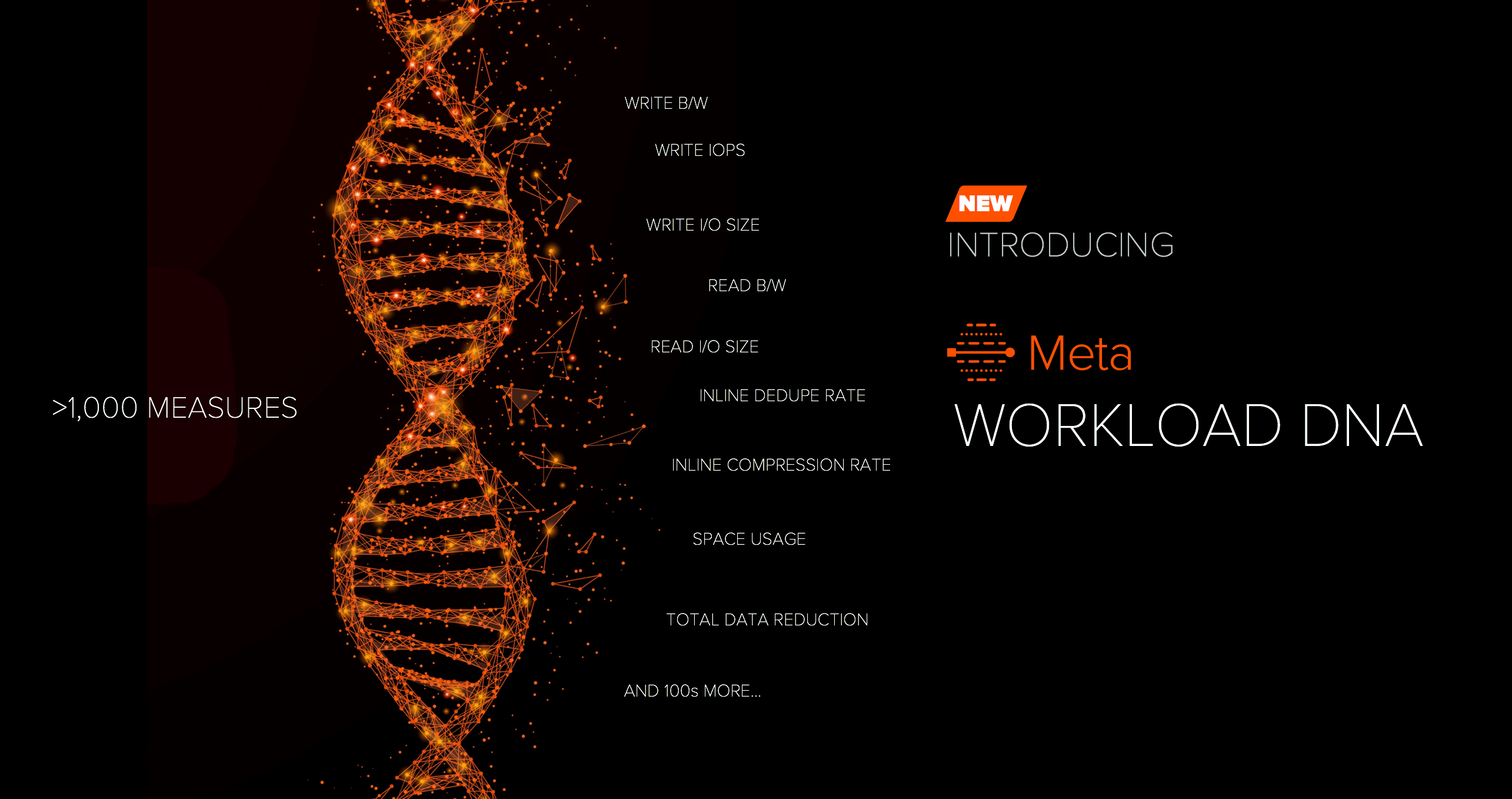

어레이에 얼마나 많은 특정 유형의 워크로드가 구동될 수 있을지 알고자 하려면, 어떻게 해야 할까요? 이는 머신러닝이 완벽하게 해결할 수 있는 문제입니다. 퓨어1 메타(Pure1 META)를 통해 워크로드 십만여 개가 포함된 데이터베이스 상에서 퓨어1(Pure1)에 수집된 모든 성능 데이터를 살펴봤습니다. 이를 통해 1천여 개의 성능 측정치 및 변수를 파악할 수 있었습니다. IOPS가 가장 중요할까? 대역폭? 아니면 응답시간? 퓨어1 메타(Pure1 META)는 이러한 변수를 모두 사용해 워크로드 성능 시그니처를 생성했습니다.

이것이 바로 ‘워크로드 DNA(Workload DNA)’의 컨셉입니다.

워크로드 DNA(Workload DNA)는 스토리지 어레이에 대한 다양한 워크로드의 적합성을 이해하는데 사용될 수 있습니다. 현재는 물론 향후 워크로드가 증가하는 경우에도 가능합니다. 어레이의 워크로드가 어떻게 증가할까? 향후 성능 또는 용량이 모자라지는 않을까? 새로운 워크로드가 어레이에 적합할까? 이러한 모든 질문에 대해 퓨어1 메타(Pure1 META)는 즉시 해답을 제공할 수 있습니다.

퓨어1 메타(Pure1 MET)는 퓨리티(Purity)의 운영 방식을 파악하며, 퓨어스토리지는 이를 다양한 방식으로 활용하고 있습니다. 가장 먼저 퓨어1(Pure1)의 새로운 툴인 워크로드 플래너(Workload Planner)에 활용했습니다.

워크로드 플래너(Workload Planner)의 간단한 UI만 보고 그 이면의 많은 기능을 간과하지 마십시오. 워크로드 플래너(Workload Planner)는 간단한 슬라이더 드래그 방식으로 워크로드의 증가율을 예측할 수 있습니다. 퓨어1 메타(Pure1 META)는 워크로드 DNA(Workload DNA)를 통해 각 워크로드를 이해하며, 퓨어스토리지의 글로벌 데이터베이스를 통해 정보를 얻어 성능 및 용량 증가율을 보다 정확하게 예측합니다. 퓨어1 메타(Pure1 META)는 매일 새로운 워크로드를 이해하면서 점점 더 스마트해집니다.

퓨어1 메타(Pure1 META)는 셀프-드라이빙 스토리지 여정을 초기부터 지원합니다.

워크로드를 진정으로 이해함으로써, 퓨어스토리지는 예측하고, 조치를 취하며, 최적화할 수 있습니다. 퓨어스토리지는 스토리지의 안정성 및 간단함을 향상시킬 수 있습니다.

글로벌 대시보드(Global Dashboard): 플래시어레이(FlashArray) 및 플래시블레이드(FlashBlade)에 대한 모든 정보를 단일한 뷰로 제공

퓨어스토리지는 퓨어1(Pure1) 데이터를 고객들이 유용하게 활용할 수 있도록 만들기 위해 지속적으로 노력하고 있습니다. 사용자 기반이 증가함에 따라, 고객들은 이제 수십 대, 심지어 수백 대의 퓨어스토리지 어레이을 구동하고 있습니다. 오늘 퓨어스토리지는 전체 올플래시 어레이에 걸쳐 용량 및 성능 정보를 종합해 전역적인 가시성을 제공해주는 새로운 글로벌 대시보드(Global Dashboard)를 발표했습니다.

모든 퓨어1(Pure1)과 마찬가지로 글로벌 대시보드(Global Dashboard)는 SaaS로 제공됩니다. 그렇기 때문에 퓨어1(Pure1)에 로그인하여 바로 사용할 수 있습니다.

요약 & 출시 시기

이제 이번 출시가 왜 퓨어스토리지 사상 최대 규모의 소프트웨어 출시인지 이해할 수 있으리라 생각합니다. 오늘 발표된 기능들은 앞으로 6개월에 걸쳐 퓨리티//FA 5.x(Purity//FA 5.x) 및 퓨리티//FB 2.x(Purity//FB 2.x)의 일부로 출시될 예정입니다. 이는 고객들이 주요 기능을 올해 안에 사용할 수 있게 된다는 것을 의미합니다. 모든 주요 기능 및 출시 예정일이 아래 그림에서 확인해 보십시오.

출시 일정 및 사양은 변경될 수 있으며, 일부 기능은 공식 출시 전에 일부 한정적으로 공개 될 수 있습니다.

이러한 퓨어스토리지의 여정에 동참해주신 모든 고객 여러분들께 감사의 말씀을 전합니다. 2017년뿐 아니라 앞으로도 계속 여러분과 협력하며 이 기능들을 개선해 나가고, 에버그린(Evergreen) 방식으로 민첩한 혁신을 지속해 나가겠습니다!