As a follow-on to the excellent blog post by Cody Hosterman on Reclaiming Windows Update Space in Windows 7, I decided to run some more in-depth VDI experiments using sDelete at a larger scale with Citrix XenDesktop Provisioning Services (PVS). I chose this particular VDI technology because I’ve noticed in lab simulations that when user changes to the write-cache are set to be discarded at logout, that space is not automatically reclaimed at the array level when the VDI desktop becomes available for a subsequent login by a new user. It should be noted that from the user’s standpoint, the amount of write-cache space available to them remains consistent. When you run Login VSI simulations that consist of several thousand desktops, as we do on a regular basis, cleaning up that dead space is very important in order to keep array utilization accurate. More in-depth results from those simulations can be found in our recently published XenDesktop 7.7 Design Guide found here.

With that being said, let’s take a look at one way to automate space reclamation on a Windows 7 x64 XenDesktop PVS deployment. As always, we strongly recommend that you test this out in your own unique environment at a small scale prior to implementation.

In our lab environment, we stream the Windows 7 x64 vDisk from a few PVS streaming servers. The write-cache itself is attached to the VM as a separate thin-provisioned vmdk when the Target Device is created with our VM template. In addition to the write-cache, we also redirect our page file and Windows Event logs to this disk (for this experiment we will refer to this disk interchangeably as the D:\ partition).

The easiest way we’ve found to implement this at scale has been via a Windows Scheduled Task. You can apply it either at the local vDisk/OS level or via GPO to the Active Directory OU where the PVS desktops reside. If other desktops are mixed into the same OU as the PVS desktops, it is probably better to set this up only on your master template, both for consistency and to avoid any unintended issues on non-PVS-based devices. In this test, we will be implementing sDelete via a local task scheduled on the streaming vDisk.

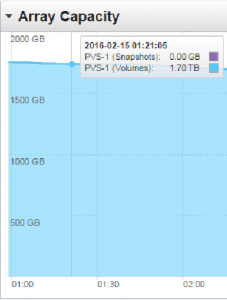

One really nice thing about our array is the ability to run several thousand VMs from a single datastore. For this test, we will run 2500 PVS-based desktops off of a single vDisk, along with two PVS streaming servers, all from a single datastore on a FlashArray//m20. After a week of running Login VSI simulations relatively often, we can see below that this datastore has become fairly bloated with dead space:

To implement sDelete, I first created a new version of the vDisk within the Provisioning Server Console and booted into it.

Next, I opened Windows Task Scheduler via Control Panel > Administrative Tools > Task Scheduler.

Then, I went down to Task Scheduler Library and selected ‘Create Task’, either by right-clicking in the middle pane or by selecting that option in the right pane.

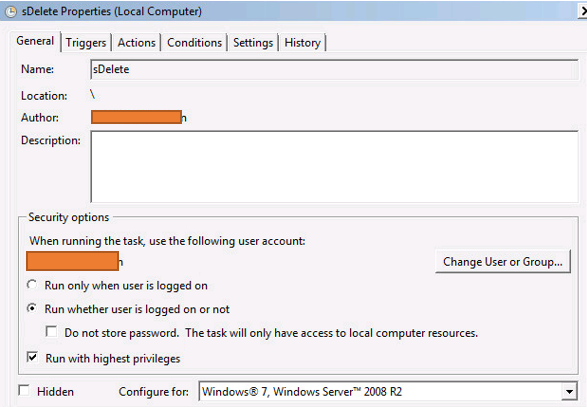

That should open the window below, in which we entered the following (sensitive domain/username information has been covered up):

We recommend using a custodial domain administrative account or system account to run this if available.

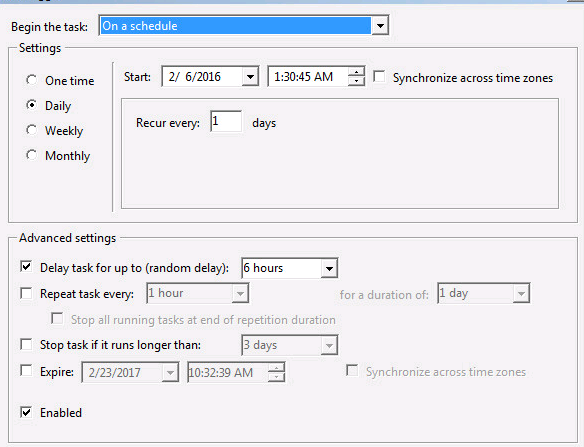

The next decision to make is when to have sDelete execute. Since it is a write-intensive operation, we highly recommend running it over a long random interval at night or on the weekend so that it will not impact the bulk of your production PVS users during the workday. For this test, we set it to run daily starting at 1:30 AM, with the start randomized across the 2,500 desktops over a 6-hour interval. For deployments close to this size or larger, I would recommend starting it earlier and running the random interval for no less than 9 hours in order to spread the tasks/writes out (again, please test this out at a small scale first to see how it performs in your environment!). I would also make sure that no other updates or virus scans are scheduled over the same interval. In production, however, you would likely only want to run this weekly unless your users are constantly logging in and out of their desktops. Note that we set our Delivery Group power policy to have all 2500 desktops powered on during this window.
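To get a rough feel for what the length of the random interval does to task density, the short Python sketch below compares the 6-hour window we used with the 9-hour window suggested for larger deployments. It assumes start times are spread uniformly across the window, which is an assumption about how the scheduler randomizes the delay rather than something we measured, and the function name is purely illustrative.

```python
def mean_starts_per_minute(desktops: int, window_hours: float) -> float:
    """Average number of sDelete tasks starting per minute, assuming
    start times are spread uniformly across the random-delay window."""
    if window_hours <= 0:
        raise ValueError("window_hours must be positive")
    return desktops / (window_hours * 60)


if __name__ == "__main__":
    # 2500 desktops, as in this test; 6 h is the window used, 9 h the suggestion.
    for hours in (6, 9):
        rate = mean_starts_per_minute(2500, hours)
        print(f"{hours}-hour window: about {rate:.1f} task starts per minute")
```

Under that assumption, stretching the window from 6 to 9 hours drops the average from roughly 7 task starts per minute to under 5. It says nothing about how long each run takes or how many runs overlap, which is why testing at a small scale first still matters.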

The next setting to make is the command to run sDelete itself. We found it easiest to copy the exe itself (available for download here) to the local C: drive of the vDisk.

Here is a description of the flags we used:

/z: Zero free space

/r: Recursively run through the entire D:\ partition

/accepteula: sDelete will prompt with a EULA that needs to be clicked through if this isn’t set

D: The drive letter of the Write-Cache partition that we are cleaning up
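Putting the flags described above together, the command line can be sketched as follows. This is a minimal Python illustration rather than the exact task action we configured: the path `C:\sdelete.exe` is a hypothetical location (we only note that the exe was copied to the local C: drive), and the helper name is made up for this example. It is also worth checking the usage output of the sDelete version you download, since flag meanings may differ between releases.

```python
import subprocess
import sys


def build_sdelete_command(exe_path: str, drive: str) -> list[str]:
    """Assemble the argument list described above: zero free space (/z),
    recurse (/r), and pre-accept the EULA (/accepteula) for one drive."""
    return [exe_path, "/z", "/r", "/accepteula", drive]


if __name__ == "__main__":
    # Hypothetical install path; adjust to wherever the exe was copied.
    command = build_sdelete_command(r"C:\sdelete.exe", "D:")
    print(" ".join(command))
    # Only run on Windows, and only when explicitly requested with --run,
    # because zeroing free space is a write-intensive operation.
    if sys.platform == "win32" and "--run" in sys.argv:
        subprocess.run(command, check=True)
```

Keeping the arguments in a list makes it easy to paste the program and its arguments separately into the scheduled task's action fields, or to try the command by hand on a single test desktop first.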

We left all options under the Conditions tab unchecked since the VMs will always be on AC power and always be powered on.

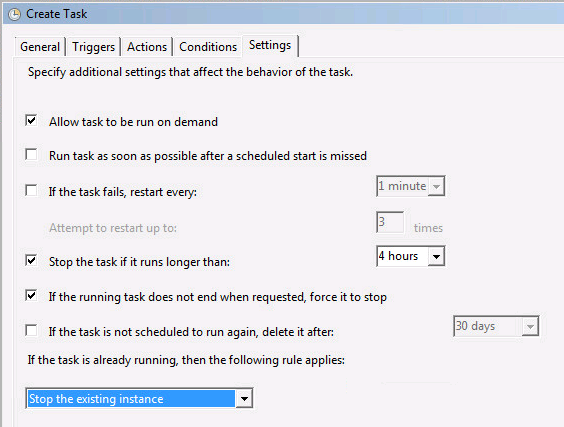

Lastly, under ‘Settings’ we made the following selections:

With the task set up and active, we then promoted the vDisk from maintenance to production and ensured that all 2500 desktops were using that version before calling it a day.

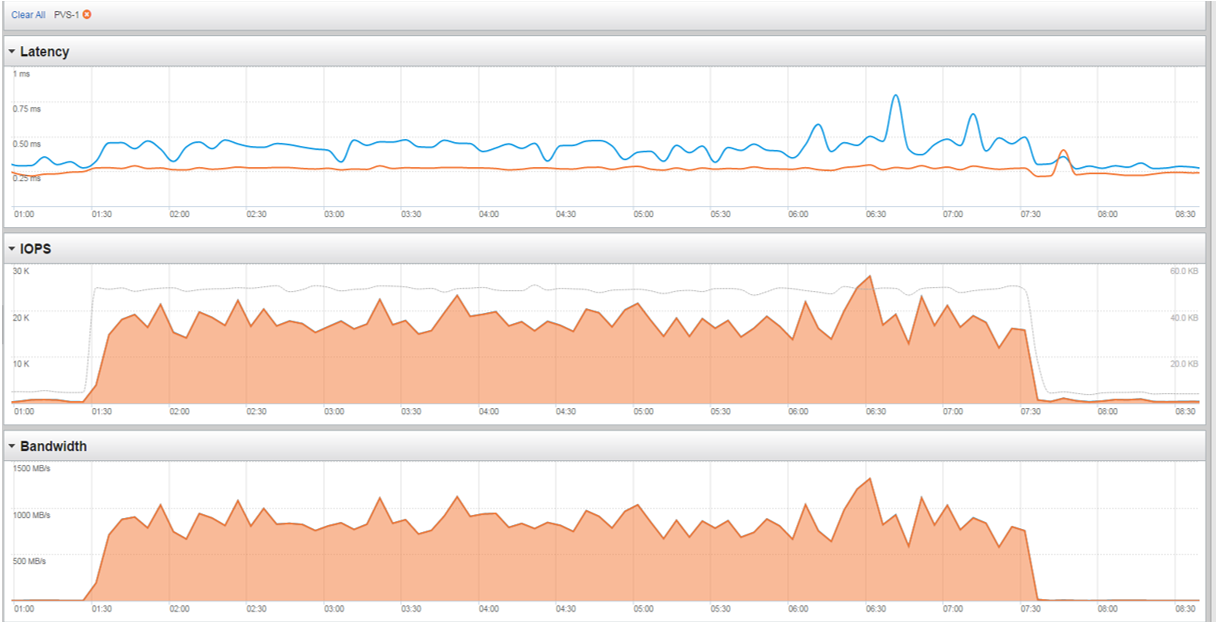

Coming in the next morning, we can see that it ran successfully based upon the performance characteristics of our datastore for the time interval specified in the scheduled task:

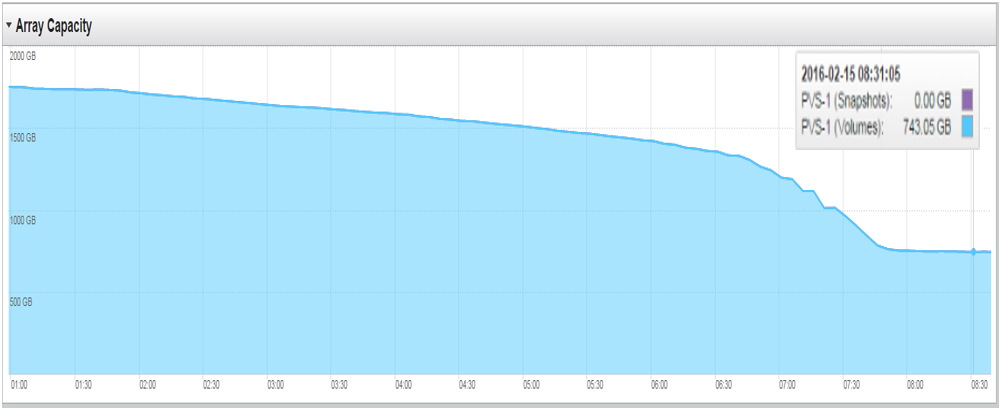

Finally, let’s take a look at our capacity during this same time interval to see how much space we were able to reclaim:

As we can see in the top-right, we are now only using 743.05 GB on this volume compared to 1.7 TB the night before. That’s nearly 1 TB reclaimed overnight!
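The arithmetic behind that figure can be checked with a few lines of Python. This is only a sketch: the 1.7 TB starting value is a rounded number, and whether the array GUI reports decimal (1000 GB per TB) or binary (1024 GB per TB) units is an assumption here, so both are shown.

```python
def reclaimed_gb(before_tb: float, after_gb: float, gb_per_tb: int = 1000) -> float:
    """Space reclaimed in GB, given usage before (in TB) and after (in GB)."""
    return before_tb * gb_per_tb - after_gb


if __name__ == "__main__":
    # Values reported above; the unit base of the 1.7 TB figure is assumed.
    for base in (1000, 1024):
        freed = reclaimed_gb(1.7, 743.05, gb_per_tb=base)
        share = freed / (1.7 * base) * 100
        print(f"{base} GB per TB: about {freed:.0f} GB reclaimed ({share:.0f}% of prior usage)")
```

Either way, the result lands between roughly 957 GB and 998 GB, or a little over half of the space consumed the night before.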

Hopefully this experiment has helped to shed some light on the importance of using sDelete in certain circumstances for your PVS environment. The same approach can easily be adapted for use in an MCS deployment with minimal changes. If you are interested in further information on using Citrix XenDesktop PVS as well as MCS on Pure Storage, I highly recommend checking out our FlashStack Converged Infrastructure Design Guide here.

Pure Storage and the “P” logo are trademarks or registered trademarks of Pure Storage, Inc. All other trademarks or names referenced in this document are the property of their respective owners.