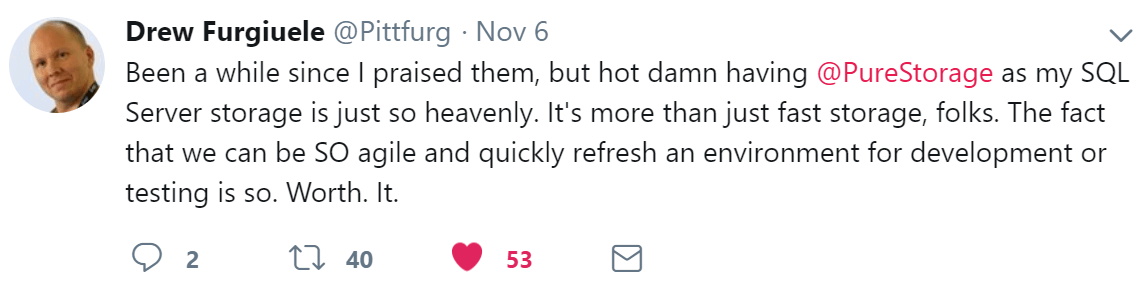

There are a number of things that we at Pure Storage are justifiably proud of: our industry-leading NPS score, which is externally certified by Satmetrix; our position as a leader in the Gartner Magic Quadrant for all-flash arrays; democratizing NVMe flash storage for the masses; and disrupting the storage industry in general. But the one thing we value above all else is when our customers articulate the experience they enjoy when owning our products, as one such customer did via Twitter here:

The comment above alludes to one of the most popular use cases for SQL Server on FlashArray™. The process of taking snapshots to create or refresh environments is not only fast and agile, but it can also be integrated into all manner of workflows via our PowerShell and Python software development kits. In fact, we have customers whose storage arrays see vastly more activity instigated via scripts than by human IT staff, and this is a growing trend.
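To make the snapshot-refresh workflow concrete, here is a minimal sketch of the order of operations a script might follow when refreshing a development volume from production. It does not use the real Pure SDK: `FakeArray`, `create_snapshot`, `overwrite_volume`, `refresh_environment`, and the volume names are all hypothetical stand-ins that run in memory, so treat it as an illustration of the sequence rather than of any actual API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class FakeArray:
    """In-memory stand-in for a storage array client (hypothetical, not the Pure SDK)."""
    volumes: Dict[str, str] = field(default_factory=dict)    # volume name -> contents
    snapshots: Dict[str, str] = field(default_factory=dict)  # snapshot name -> contents
    log: List[str] = field(default_factory=list)             # record of array operations

    def create_snapshot(self, volume: str, suffix: str) -> str:
        # Capture the volume's current contents under "<volume>.<suffix>".
        name = f"{volume}.{suffix}"
        self.snapshots[name] = self.volumes[volume]
        self.log.append(f"snapshot {name}")
        return name

    def overwrite_volume(self, snapshot: str, target: str) -> None:
        # Replace the target volume's contents with the snapshot's contents.
        self.volumes[target] = self.snapshots[snapshot]
        self.log.append(f"overwrite {target} from {snapshot}")


def refresh_environment(
    array: FakeArray,
    source: str,
    target: str,
    suffix: str,
    take_offline: Callable[[str], None],
    bring_online: Callable[[str], None],
) -> str:
    """Refresh `target` from a new snapshot of `source`; return the snapshot name."""
    take_offline(target)  # e.g. take the database on the target volume offline
    try:
        snapshot = array.create_snapshot(source, suffix)
        array.overwrite_volume(snapshot, target)
    finally:
        bring_online(target)  # always bring the target back, even on failure
    return snapshot


# Example run against the in-memory fake (volume names are made up).
array = FakeArray(volumes={"prod-sql-data": "prod rows", "dev-sql-data": "stale rows"})
events: List[str] = []
snapshot_name = refresh_environment(
    array,
    source="prod-sql-data",
    target="dev-sql-data",
    suffix="refresh-001",
    take_offline=lambda vol: events.append(f"offline {vol}"),
    bring_online=lambda vol: events.append(f"online {vol}"),
)
```

In a real workflow the two callbacks would be whatever takes the SQL Server database offline and online again, and the array calls would go through the PowerShell or Python SDK. Wrapping the array operations in `try`/`finally` is one way to make sure the target is brought back online even if the refresh fails partway.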

Why Our Customers Like The Pure Experience So Much

The reason we are held in such high esteem by our customers goes beyond this one data service. A message we consistently receive from our customers, irrespective of their size, is that they love the ease of use that comes with our products. Simplicity and ease of use are a mantra at Pure and are built into the DNA of our engineering teams. This ease of use allows our customers to leave behind the legacy stance of being on the back foot, managing storage that is complex and cumbersome. With Pure, our customers instead step forward and enjoy a simple, powerful, and reliable storage experience, which leads to feedback such as “It simply just works”.

Releasing The Shackles Of Legacy Storage That Are Holding You Back

As we roll out new features, our customers have found that we are sticking to our core values and principles of simplicity and ease of use. If the legacy storage platforms your organisation runs SQL Server workloads on are holding you back, take a look at Pure:

| Legacy Storage Problem | How Pure Solves This |
| --- | --- |
| RAID levels, tiering, storage controller cache configuration, tuning and tweaking. | FlashArray does away with having to worry about RAID levels, tiering, controller cache configuration and any type of tuning. |
| Data encryption at rest requiring that database administrators implement transparent database encryption. | Data encryption at rest requires no database administrator effort on FlashArray. This is handled by the array, which also rotates encryption keys once every 24 hours. |
| A lack of integration with popular automation tools. | FlashArray furnishes support for Puppet, Ansible, SaltStack and vRealize Orchestrator. Any tool or platform that can call PowerShell, Python or a REST API can be used to automate FlashArray management. |
| Data migrations every time the storage platform is changed. | Many of Pure’s customers have traversed several generations of FlashArray without ever having to undergo a data migration. One of Pure’s founding principles was to do away with forklift-style rip-and-replace upgrades. |
| Complex and cumbersome-to-manage storage replication solutions required for failover clusters with nodes in different data centers. | ActiveCluster for FlashArray is by design the industry’s easiest-to-manage storage replication solution. |
| Slow database restoration times that do not satisfy a business’s recovery time objectives. | Pure’s FlashBlade™ is a proven rapid-restore solution in this problem domain. |
| A lack of insight into the virtualized platforms that SQL Server runs on. | Pure provides VMware analytics via Pure1®. |
| Complex and lacking integration for ‘Newstack’ technologies that SQL Server can now run on. | Pure provides storage integration for both Docker and Kubernetes . . . making them incredibly simple to use. |

SQL 101: We Are Here To Help, Let's Talk

If you would love simple, reliable, fast storage to service your SQL Server workloads, including backups and restores, then we would very much like to have a conversation with you. And this applies not only on-premises, but now also in the public cloud.