Before diving in, I want to set the stage by reviewing the flash storage journey and how we got to this point.

At Pure, we’ve been proud of replacing high-speed spinning drives with flash in tier 1 environments over the last ten years (ten years! Can you believe it?), but in all that time we’ve noticed a bit of an orphan in this space. What about workloads that don’t require ultra-high performance and low-microsecond latency? These secondary workloads still reside on storage arrays that are plagued with spinning hard drives, and the slow variety at that. Many of these arrays pair slow spinning hard drives with flash (either SSDs or PCIe cards using NAND flash) in an attempt to provide the benefits of flash-based storage alongside the low $/gigabyte (GB) economics that spinning drives bring to the table. These storage arrays are commonly referred to as “hybrid storage.”

There have been startups that took the idea from legacy storage vendors and improved on the caching technology, while exposing as much of the throughput capability as possible from SAS/SATA-attached spinning hard drives, typically 7K RPM. It was an interesting idea for its time, but when hybrid was at its height, circa 2015 to 2017, flash media was still somewhat expensive for fully populating a storage array. Hybrid storage was more of a stop-gap between legacy architectures and the destination of an all-flash data center. At Pure, we began with the goal of providing a modern, all-flash data storage experience, and didn’t take the easy route of mixing flash with spinning media.

Beyond architecture, it’s important to understand what customers consider to be tier 2 within their organization, and what has historically needed to sit on a storage array that isn’t all-flash. That determination can vary from one company to another. After speaking with many customers, we identified a number of workloads that share common requirements. They include tier 2 VM farms, dev/test, QA, disaster recovery, snapshot offload, and lower-tier database workloads. Up to this point, system administrators have been forced to put these and other workloads on hybrid storage arrays. This was due, in large part, to the still higher cost per GB of all-flash, which many have already adopted for their tier 1 workloads.

We can all agree that certain hybrid storage arrays have come a long way with regard to performance and density. However, users still have to overcome cache misses, hot spots, and legacy operational headaches such as RAID groups, pools, aggregates, 3-5 year forklift upgrades, and more. If that isn’t enough of a headache, they are also limited to the largest common 7K spinning hard drives, which currently sit at 10-12TB. To be honest, I’d be hesitant to use any spinning drive that large. The rebuild times alone are enough to make most storage administrators break out in a cold sweat. As a result, environments that require a lot of capacity must populate their hybrid storage arrays with many hard drives (seriously, a lot: over 300 to get to 5PB of effective capacity!) to fulfill both the capacity and performance needs of the customer. This doesn’t even account for the high failure rates of the drives, or the complex calculation of flash-to-HDD ratios (whether the flash is SSD or PCIe) needed to minimize cache misses.

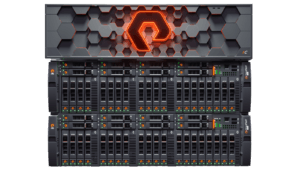

Enter an all-flash storage array for tier 2 workloads. We have developed what we believe to be the world’s first capacity-optimized all-flash storage array: the FlashArray™//C. FlashArray//C differs from FlashArray//X in performance and cost. We are by no means compromising on tier 1 enterprise features such as availability, resilience, efficiency, and snapshots. Typically, tier 1 all-flash storage arrays are tuned to drive the lowest latency possible, dedicating most of the system resources to that goal. Capacity-optimized means that we have tuned the storage array to address not only greater overall capacity, but also higher density per DirectFlash™ Module. As a result, the average latency of FlashArray//C is in the 2-4ms range, as opposed to the very low microsecond latency of the FlashArray//X. Even so, FlashArray//C’s latency still blows most hybrid storage arrays right out of the water.

The new DirectFlash™ Modules maintain full NVMe connectivity and, thanks to our DirectFlash technology, our operating system, Purity//FA, is able to communicate directly with the raw NAND. This allows us to better utilize new types of dense media, including QLC. Most storage array vendors in the market are not able to effectively utilize QLC, due to its lower performance and resiliency. They will have a very difficult time trying to make QLC work, because of the way their legacy architectures utilize flash and the “off the shelf” QLC SSD form factor they leverage. They simply don’t have the technology to communicate directly with the NAND the way Pure can with DirectFlash. So, if a storage manufacturer tells you they don’t use QLC because it’s not reliable enough, it’s too slow, or the cost difference between QLC and TLC isn’t that big, it’s due to complications with the architecture they’ve chosen. Those manufacturers have to weigh QLC’s price advantage against the over-provisioning they need in order to use the SSDs. Once that over-provisioning is factored in, the price advantage, for them, is negligible. Pure’s DirectFlash technology allows us to maintain the price advantage, since we’re not required to over-provision in order to provide reliable and performant QLC flash storage.

The new FlashArray//C currently offers three ultra-dense capacity options: 1.3PB, 3.2PB, and 5.2PB effective, in 3U, 6U, and 9U sizes respectively. The //C60 controller is tuned to provide consistent low latency while addressing high and dense capacity, all while providing the $/GB economics that customers would expect from a hybrid storage array. Users can expect consistent 2-4ms latency, without the worries or management of storage tiering and caching. The //C array provides the rich data services Pure FlashArray customers have come to enjoy, such as variable-block deduplication, compression, snapshots and clones, rich API integration, replication, cloud integration, and the earth-shaking disruption of Evergreen: Free Every Three, Capacity Consolidation, Love Your Storage, and more!
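To put those three options side by side, here is a minimal sketch that works out effective capacity per rack unit from the figures quoted above. The names `CONFIGS`, `density_pb_per_u`, and `density_table` are made up for illustration and are not part of any Pure Storage tool or API; the only inputs are the effective-capacity and rack-unit numbers from this post.

```python
# Effective capacity (PB) and chassis height (rack units), as quoted in the post.
# The names below are illustrative only, not part of any Pure Storage API.
CONFIGS = [(1.3, 3), (3.2, 6), (5.2, 9)]


def density_pb_per_u(effective_pb: float, rack_units: int) -> float:
    """Return effective petabytes per rack unit for one configuration."""
    return effective_pb / rack_units


def density_table(configs=CONFIGS):
    """Return (effective_pb, rack_units, pb_per_u rounded to 3 places) per config."""
    return [(pb, u, round(density_pb_per_u(pb, u), 3)) for pb, u in configs]


if __name__ == "__main__":
    for pb, u, density in density_table():
        print(f"{pb} PB effective in {u}U -> {density} PB per rack unit")
```

By this arithmetic, effective density climbs from roughly 0.43PB per rack unit in the 3U option to roughly 0.58PB per rack unit in the 9U option.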

We encourage you to use our new Customer Solution Center for a live demo or POC and see the //C in action with your own workload or any simulated workload of your choice. For additional information, contact your Pure Storage value-added partner or account team, or check out FlashArray//C and the FlashArray//C Datasheet.