Oracle ASM always stripes and mirrors the data across all disks in a disk group when the redundancy mode is either NORMAL or HIGH and does only stripping when it is in EXTERNAL mode. Often times, we get question about whether customer can migrate their Oracle data out of their current storage to Pure Storage® when the ASM diskgroup is in EXTERNAL redundancy mode.

The answer is Yes, it can and can be done online.

This post illustrates the ASM migration on diskgroup with EXTERNAL redundancy.

ASM migration on diskgroup

Test environment: Oracle 12c on VM with ASM along with ASMlib.

Test sequence

- Create two pure storage volumes, attach to the ESX host, rescan the storage.

- Create a new datastore out of the first volume.

- Provision a VMFS disk out of the datastore and attach to the VM as a new disk.

- Using ASMlib perform a createdisk to update the header of the disk as ASM disk.

- Create ASM diskgroup with external redundancy on this disk.

- Setup Oracle database on that disk group

- Setup SLOB & run load

- Add a new RDM disk using the second volume that was attached to the ESX and attach to the VM.

- Using ASMlib perform a createdisk to update the header of the disk as ASM disk on the partition.

- Attach the disk to the diskgroup and rebalance it and remove the other disk with the same command

- Validate the database

For brevity, will start after sequence#3, where the VMFS disk attached to the Oracle VM as /dev/sdc and we have created a partition using fdisk.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 |

[root@orasmprod ]oracleasm createdisk DATA1 /dev/sdc1 Writing disk header: done Instantiating disk: done SYS@+ASM> @asmdisk DISK_NUMBER HEADER_STATU Total GB Free GB PATH —————– —————— —————– —————– ——————————————— 0 PROVISIONED .00 .00 /dev/oracleasm/disks/DATA1 0 MEMBER 4.00 3.94 /dev/oracleasm/disks/ASMDATA SYS@+ASM> create diskgroup DATA external redundancy 2 disk ‘/dev/oracleasm/disks/DATA1’ name DATA1 3 attribute ‘compatible.asm’=‘12.1’; Diskgroup created. |

At this point we installed SLOB on the PROD database using the +DATA diskgroup and started the SLOB run with 8 users and let it run throughout the following steps. Assuming this is the current setup where a customer has databases running on their current storage, we moved ahead with sequence #8, where we added the second volume as RDM disk to the VM as /dev/sdd to mimic adding new volume from Pure storage. Followed the same procedure with creating a partition and invoked oracleasm to stamp the header of the disk.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 |

[root@orasmprod ]# oracleasm createdisk DATA2 /dev/sdd1 Writing disk header: done Instantiating disk: done SYS@+ASM> @asmdisk DISK_NUMBER HEADER_STATU Total GB Free GB PATH —————– —————— —————– —————– ——————————————— 0 PROVISIONED .00 .00 /dev/oracleasm/disks/DATA2 0 MEMBER 4.00 3.94 /dev/oracleasm/disks/ASMDATA 0 MEMBER 50.00 18.14 /dev/oracleasm/disks/DATA1 SYS@+ASM> @asmdg Block Group N Path Disk Name Size AU STATE ———– —————————————— —————— ——– —— —————– ASMDATA /dev/oracleasm/disks/ASMDATA ASMDATA_0000 4096 1 MOUNTED DATA /dev/oracleasm/disks/DATA1 DATA1 4096 1 MOUNTED SYS@PROD> select name from v$datafile; NAME ———————————————————————————————— +DATA/prod/system01.dbf +DATA/prod/sysaux01.dbf +DATA/prod/undotbs01.dbf +DATA/PROD/DATAFILE/iops.266.919089197 +DATA/prod/users01.dbf |

At this time, we went ahead and issued the alter diskgroup command to add the new disk DATA2 and drop the old disk DATA1 along with rebalance option.

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 |

SYS@+ASM> alter diskgroup DATA add disk ‘/dev/oracleasm/disks/DATA2’ name NEW_DATA1 drop disk DATA1 rebalance power 16 wait; Diskgroup altered. Elapsed: 00:00:39.32 SYS@+ASM> @asmdg Block Group N Path Disk Name Size AU STATE ———– —————————————— —————— ——– —— —————– ASMDATA /dev/oracleasm/disks/ASMDATA ASMDATA_0000 4096 1 MOUNTED DATA /dev/oracleasm/disks/DATA2 NEW_DATA1 4096 1 MOUNTED SYS@+ASM> @asmdisk DISK_NUMBER HEADER_STATU Total GB Free GB Path —————– —————— —————– —————– ——————————————— 0 FORMER .00 .00 /dev/oracleasm/disks/DATA1 0 MEMBER 4.00 3.94 /dev/oracleasm/disks/ASMDATA 1 MEMBER 50.00 16.48 /dev/oracleasm/disks/DATA2 |

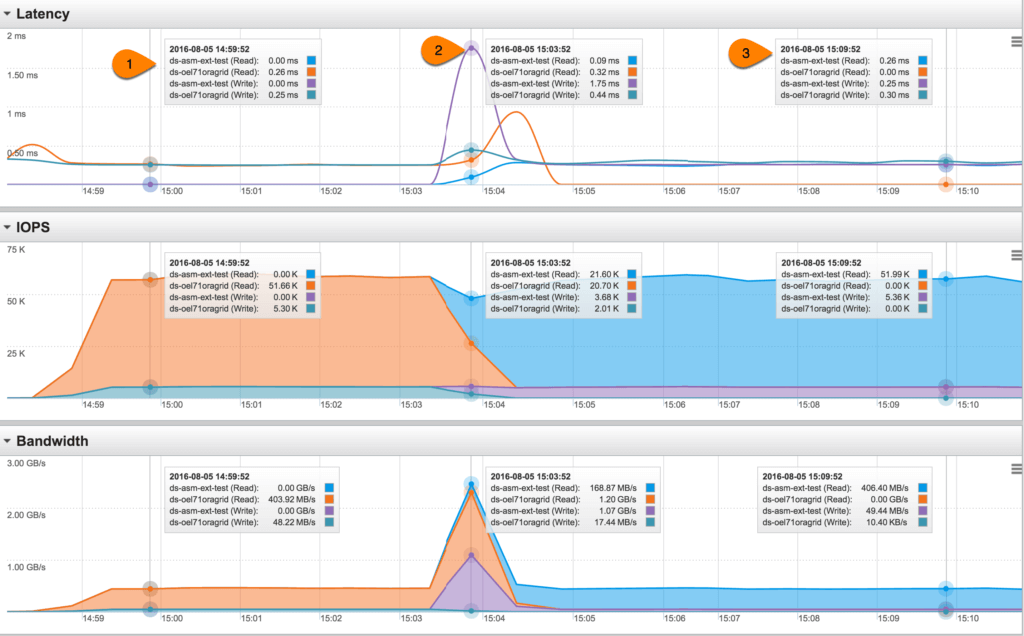

All this time the SLOB kept running without any issues. As a matter of fact the Pure Storage GUI below shows the best part of how the performance remained same before and after the disk addition/removal.

1) SLOB was running on the diskgroup with DISK1 from the VMFS (ds-oel71oragrid) and performing over 51K read IOPS, 5K write IOPS with latencies under 0.26ms and total bandwidth over 450MBps.

2) This is the time when the alter diskgroup command was executed where the new RDM disk (ds-asm-ext-test) was added to the diskgroup while the prior disk was removed and rebalance performed. As you can see on the Bandwidth section, more data was read from the old disk and they were written onto the new disk (in the order of 1 GBps).

3) At this time, SLOB is running on the diskgroup with DISK2 from the RDM and performing at similar performance metrics as it was earlier.

ASM is one of the best feature Oracle has ever provided (at no cost) and it enables seamless migration whether it is NORMAL or EXTERNAL redundancy.

ASM Migration Scripts

|

1 2 3 4 5 6 7 8 9 10 |

rem rem asmdisk.sql rem set pages 100 set lines 132 col path format a30 col tg format 999,999.99 heading ‘Total GB’ col fg format 999,999.99 heading ‘Free GB’ select disk_number, header_status, total_mb/1024 tg, free_mb/1024 fg, path from v$asm_disk order by group_number, disk_number; |

|

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 |

rem rem asmdg.sql rem set pages 1000 set term off col mp new_value pname col dp new_value dname col gp new_value gname select ‘a’||max(length(path)) mp, ‘a’||max(length(name)) dp from v$asm_disk; select ‘a’||max(length(name)) gp from v$asm_diskgroup; set term on col path form &&pname heading “Path” col diskname form &&dname heading “Disk Name” col groupname form &&gname heading “Group Name” col sector_size form 99999 heading “Sector|Size” col block_size form 9999 heading “Block|Size” col total_gig form 9,999,999 heading “Group|Total|GB” col free_gig form 9,999,999 heading “Group|Free|GB” col dtotal_gig form 9,999,999 heading “Disk|Total|GB” col dfree_gig form 9,999,999 heading “Disk|Free|GB” col au form 999 heading “AU” col failgroup format a15 heading ‘Fail Group’ set lines 150 break on groupname on total_gig on free_gig skip 1 SELECT g.name groupname, d.path, d.name diskname, g.block_size, g.allocation_unit_size/1024/1024 au, g.state FROM v$asm_diskgroup g, v$asm_disk d WHERE d.group_number = g.group_number ORDER BY g.name, d.disk_number; clear breaks |