In this blog, I discuss Veeam’s SAN mode configuration, which delivers optimal data transport. However, ensuring that SAN mode is actually used can sometimes be challenging. I will provide details about the SAN mode setup and how to avoid common pitfalls.

Before we jump into configuration, let us look at what Direct SAN mode and Network (NBD) mode are.

Direct SAN

Direct SAN access transport mode is best suited for virtual machines with disks located on shared VMFS volumes that are connected to ESXi hosts over iSCSI or FC. In this case, Veeam uses VMware’s VADP (VMware vSphere Storage APIs – Data Protection) to move the VM data (vmdk files) directly to and from FC and iSCSI shared storage. VM data moves through the SAN, bypassing the ESXi hosts and the LAN. (See Figure 1.)

Figure 1.

Network Mode

In Network mode, data is transferred via the ESXi host over the LAN using the Network Block Device (NBD) protocol. Network mode is not recommended because limited or heavily utilized network bandwidth leads to low data transfer speeds, which extends backup and restore times.

SAN And Network Mode Tests

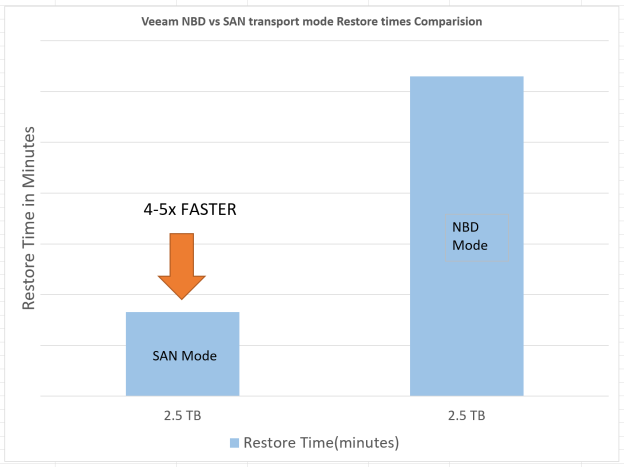

We performed performance testing with Veeam version 9.5 by backing up and restoring a single large virtual machine with direct SAN and network modes.

The test results are shown below. Backing up the same virtual machine over the SAN resulted in a job processing speed 4 to 5 times higher than in network mode.

Figure 2

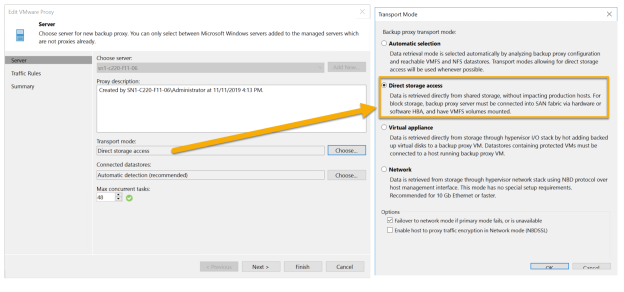
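To get a feel for what a 4 to 5 times higher processing speed means for the backup window, here is a minimal sketch. The VM size and the NBD throughput are hypothetical values chosen only for illustration; they are not the measured results from the test above.

```python
def transfer_hours(size_gb: float, throughput_mb_s: float) -> float:
    """Hours needed to move size_gb at a sustained throughput given in MB/s."""
    if throughput_mb_s <= 0:
        raise ValueError("throughput must be positive")
    return size_gb * 1024 / throughput_mb_s / 3600


# Hypothetical inputs for illustration only (not measured values).
vm_size_gb = 2048            # assumed size of the VM being backed up
nbd_mb_s = 100               # assumed sustained throughput in network mode
san_mb_s = nbd_mb_s * 4.5    # midpoint of the 4 to 5 times range

nbd_hours = transfer_hours(vm_size_gb, nbd_mb_s)
san_hours = transfer_hours(vm_size_gb, san_mb_s)
print(f"NBD: {nbd_hours:.1f} h, SAN: {san_hours:.1f} h")
```

Plugging in your own VM size and observed throughput gives a rough estimate of how much the backup window could shrink when the job runs in SAN mode.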

Configuring Direct SAN Access Mode for Backup

In order to use the Direct SAN access mode, make sure that the following requirements are met:

- To get the best performance, always use a physical backup proxy in Direct SAN access mode. If your backup proxy is a VM, performance may not be optimal. See Figure 5.

Figure 5

- A backup proxy using the Direct SAN access mode must have direct access to the production storage via a hardware or software HBA. It is highly recommended to use Pure Storage snapshots for backups. FlashArray snapshots are instantaneous and do not stun (slow down) the VM. See the advanced settings for the backup job in Figure 6 to ensure the backup job uses storage snapshots for backups.

Figure 6.

Configuring Direct SAN Access Mode for Restore

- The SAN volumes that back the VMware datastores have to be presented to the proxy server OS in offline mode. If a volume is initialized by the OS, the VMFS file system may be overwritten by NTFS, which can corrupt the data. See Figure 7 for an example of a datastore’s SAN volume presented to the Veeam proxy’s OS as an offline volume.

Figure 7.

- The VMware datastore disk presented to the OS (Step 3) should have write access. Otherwise, the backup or restore job may switch to NBD mode or fail with the following error during a restore job:

Restore job failed Error: VDDK error: 16000 (One of the parameters supplied is invalid).

To solve this problem, clear the read-only attribute of the disk using diskpart.

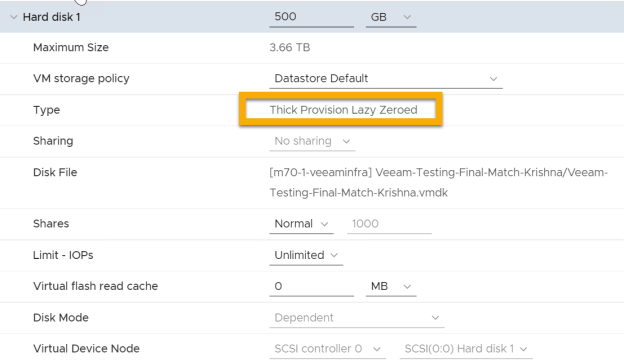
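The diskpart step can also be scripted. The sketch below is only an illustration: it builds a small diskpart script that selects a disk, clears its read-only attribute, and prints the disk details, and it can optionally pass that script to `diskpart /s`. The helper names and the disk number are hypothetical; confirm the correct disk number with `list disk` first, and run it from an elevated prompt on the Windows proxy.

```python
import subprocess
import tempfile
from pathlib import Path


def build_clear_readonly_script(disk_number: int) -> str:
    """Return diskpart commands that clear the read-only attribute of one disk."""
    if disk_number < 0:
        raise ValueError("disk_number must be non-negative")
    lines = [
        f"select disk {disk_number}",
        "attributes disk clear readonly",
        "detail disk",  # prints the disk attributes so the change can be checked
    ]
    return "\n".join(lines) + "\n"


def run_diskpart(script: str) -> str:
    """Run a diskpart script (Windows only, requires administrator rights)."""
    with tempfile.TemporaryDirectory() as tmp:
        path = Path(tmp) / "clear_readonly.txt"
        path.write_text(script)
        result = subprocess.run(
            ["diskpart", "/s", str(path)],
            capture_output=True, text=True, check=True,
        )
    return result.stdout


if __name__ == "__main__":
    # Disk 2 is a hypothetical example; this only prints the script.
    print(build_clear_readonly_script(2))
```

Note that the script does not bring the disk online, so the volume stays offline to the proxy OS as required above.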

- Only “Thick Provision Lazy Zeroed” and “Thick Provision Eager Zeroed” VM disks can be used in Direct SAN mode. See Figure 8.

Figure 8.

- SAN access mode cannot be used for incremental restore due to VMware limitations. Disable CBT for the VM’s virtual disks for the duration of the restore process by setting ctkEnabled to False in the advanced VM configuration parameters. See Figure 9.

Figure 9.
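As a small illustration of the CBT setting above, the following sketch lists the advanced configuration parameters that would be set to disable CBT. The function name is hypothetical, and the per-disk keys of the form `scsi0:0.ctkEnabled` are an assumption based on VMware's CBT naming convention rather than something shown in this post, so verify them against your VM's configuration before applying anything.

```python
from typing import Dict, Iterable


def cbt_disable_params(disk_nodes: Iterable[str]) -> Dict[str, str]:
    """Build the advanced VM parameters that turn CBT off.

    disk_nodes are virtual device nodes such as "scsi0:0" (assumed naming).
    """
    params = {"ctkEnabled": "FALSE"}                # VM-level CBT switch
    for node in disk_nodes:
        params[f"{node}.ctkEnabled"] = "FALSE"      # per-disk CBT switch
    return params


# Hypothetical VM with two disks on the first SCSI controller.
for key, value in cbt_disable_params(["scsi0:0", "scsi0:1"]).items():
    print(f"{key} = {value}")
```

Remember to turn CBT back on after the restore if your backup jobs rely on it for incremental backups.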

Direct SAN Mode Limitations

Direct SAN access mode is not supported for VMs residing on vSAN, and VVols-based virtual machines are not supported either. In these cases, use the Virtual appliance or Network transport mode instead. If these limitations don’t apply, we highly recommend SAN mode to get the best performance with Veeam software.
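The requirements and limitations described in this post can be summarized as a simple pre-flight check. This is a minimal sketch with hypothetical names (`Datastore`, `Job`, `direct_san_blockers`); it only encodes the rules stated above and does not query Veeam or vSphere.

```python
from dataclasses import dataclass
from enum import Enum
from typing import List


class Datastore(Enum):
    VMFS_SAN = "VMFS on FC/iSCSI"
    VSAN = "vSAN"
    VVOL = "VVols"


@dataclass
class Job:
    datastore: Datastore
    is_restore: bool = False
    thick_disks: bool = True           # Lazy Zeroed or Eager Zeroed
    incremental_restore: bool = False


def direct_san_blockers(job: Job) -> List[str]:
    """Return the reasons a job cannot run in Direct SAN mode (empty if none)."""
    reasons = []
    if job.datastore is Datastore.VSAN:
        reasons.append("vSAN is not supported")
    if job.datastore is Datastore.VVOL:
        reasons.append("VVols are not supported")
    if job.is_restore and not job.thick_disks:
        reasons.append("restore requires thick provisioned disks")
    if job.is_restore and job.incremental_restore:
        reasons.append("incremental restore is not supported")
    return reasons


job = Job(Datastore.VMFS_SAN, is_restore=True, thick_disks=False)
blockers = direct_san_blockers(job)
print("Direct SAN OK" if not blockers else f"Fall back: {blockers}")
```

An empty list means none of the limitations listed here apply; otherwise, the job is a candidate for the Virtual appliance or Network transport mode.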

Summary

As we have seen, SAN mode is the fastest transport mode available in Veeam. By using the high bandwidth of the SAN together with FlashArray’s instantaneous volume snapshots, and by avoiding data transfer through the ESXi host, the backup and restore window can be shortened significantly, which improves the recovery time objective (RTO).