The public cloud provides a rich ecosystem of platforms and tools that are incredibly easy to consume. Pure Storage™ provides the industry’s leading storage platforms, designed from the ground up for flash storage. What if you could leverage the strengths of the public cloud and Pure Storage’s industry-leading storage platforms simultaneously?

Pure Storage has already announced Purity CloudSnap™ and Cloud Block Store on Amazon Web Services (AWS), with support for other public cloud providers to follow. This post covers an innovative solution that leverages both the Azure cloud and an on-premises FlashArray™ to implement a continuous integration build pipeline.

Continuous Integration Pipelines for the Microsoft Stack

Team Foundation Server is a popular continuous integration (CI) platform for developers and teams using Microsoft .NET, Azure and SQL Server. The Azure-hosted incarnation of this CI platform was Visual Studio Team Services, colloquially referred to as VSTS. In 2018 VSTS became Azure DevOps. Azure Pipelines, the element of Azure DevOps that encapsulates CI pipeline functionality, introduces a key change: the ability to specify build pipelines in YAML. And the good news does not stop there: build pipeline YAML declarations are stored in the same source code repositories as the code they build.

Basic Azure Pipelines Architecture

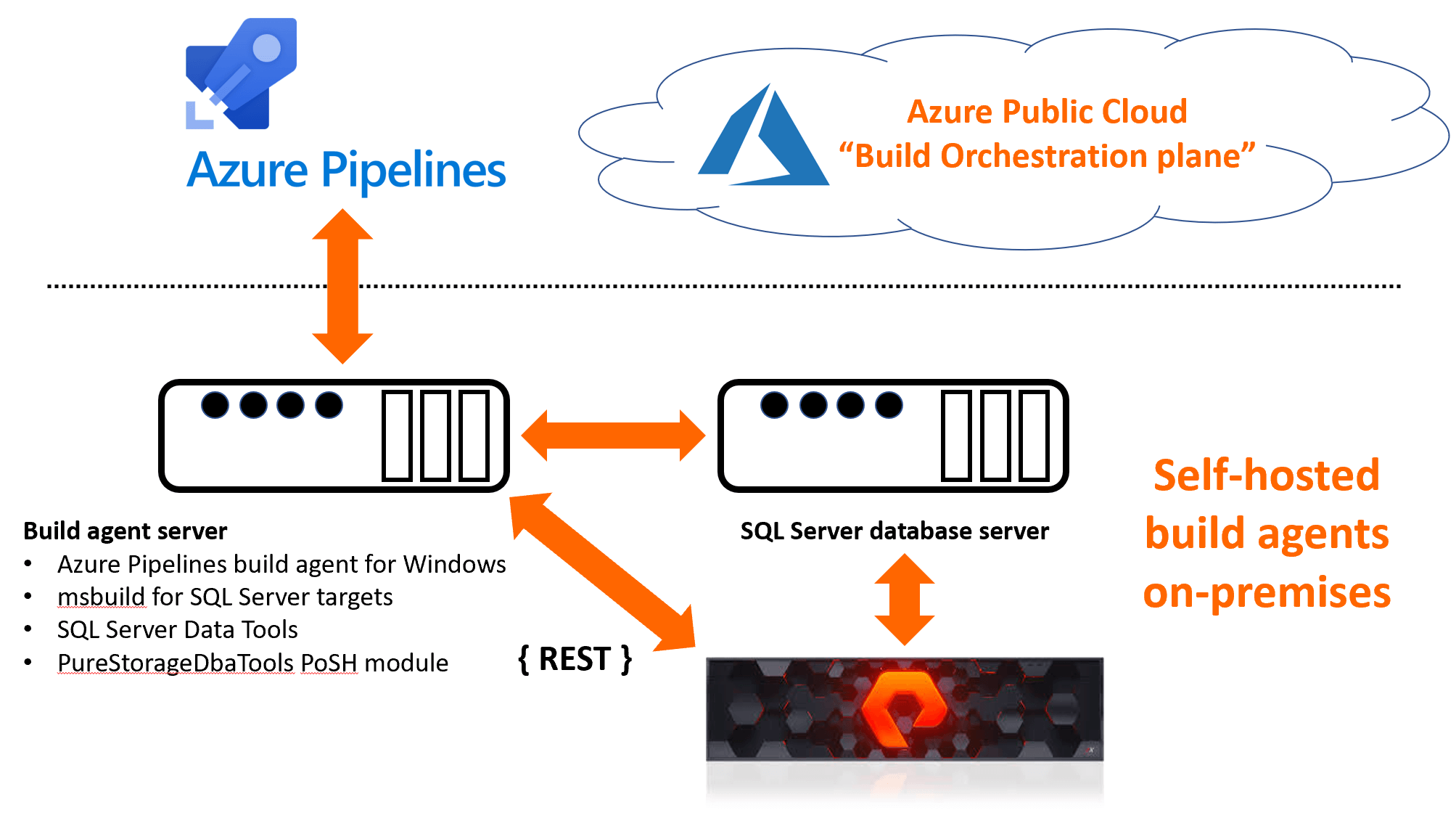

“Build agents” check code out of a source code repository and create deployable artifacts to deploy somewhere for testing. They can be either:

- hosted, i.e. they run in the Microsoft Azure cloud,

- self-hosted, meaning that they run on-premises.

In the latter case, Azure Pipelines acts as the continuous integration orchestration plane for build agents that run on-premises. Communication between Azure Pipelines and self-hosted agents takes place over HTTPS and is authenticated with access tokens.
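
As a rough sketch of how this choice appears in a pipeline definition, the `pool` key of a job selects where that job runs. The job names and the pool name `OnPremAgents` below are hypothetical placeholders rather than names taken from the example repository; `windows-latest` is one of the Microsoft-hosted images.

```yaml
jobs:
# Hosted: Azure Pipelines provisions an agent in the Microsoft Azure cloud
- job: HostedBuild
  pool:
    vmImage: 'windows-latest'
  steps:
  - script: echo Running on a Microsoft-hosted agent

# Self-hosted: the job is dispatched to an agent registered in a named pool,
# for example a build server in the same data center as the FlashArray
- job: OnPremBuild
  pool:
    name: 'OnPremAgents'   # hypothetical pool name
  steps:
  - script: echo Running on a self-hosted agent
```

A self-hosted pool is what allows a pipeline orchestrated from Azure to reach on-premises resources that are not exposed to the internet.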

Our Example Build Pipeline

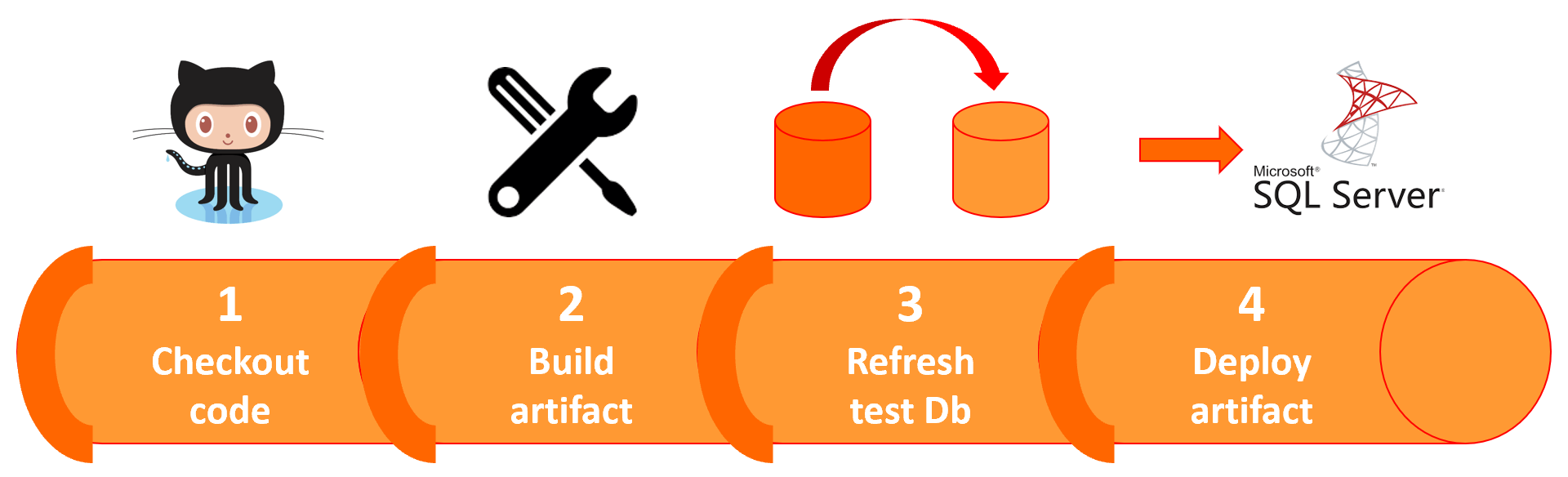

This pipeline illustrates the best-of-both-worlds approach to continuous integration.

The pipeline carries out the following functions:

- Checks a SQL Server data tools project out of GitHub,

- Uses msbuild to create a deployable artifact called a data-tier application package (or “DACPAC” in SQL Server DBA parlance) from that project,

- Invokes a PowerShell function to refresh a test database from a production database,

- Uses sqlpackage.exe to deploy the DACPAC to the SQL Server test database.

Build Environment Topology

The README for the GitHub repository associated with this pipeline provides full and detailed instructions for setting the pipeline up.

Azure Pipelines Pipeline-As-Code

The YAML that defines the build pipeline can be found on GitHub; below is an excerpt of what it looks like:

```yaml
#
# Example Azure DevOps pipeline to:
#
# 1. Checkout a SQL Server data tools project
# 2. Build the project into a DACPAC
# 3. Refresh a development database from pseudo production, this is carried out by
#    a call to the Invoke-PfaDbRefresh function from the PureStorageDbaTools PoSH module
# 4. Apply the DACPAC to the development database
#
trigger:
- master

pool: $(agentPool)

steps:
- task: MSBuild@1
  displayName: 'Build DACPAC'
  inputs:
    solution: 'AzureDevOps-Fa-Snapshot-CI-Pipeline.sln'
    msbuildArguments: '/property:OutDir=bin\Release'
# Create a secret variable
- powershell: |
    $securePassword = ConvertTo-SecureString -String '$(pfaPassword)' -AsPlainText -Force
    $pfaCreds = New-Object System.Management.Automation.PSCredential '$(pfaUsername)', $securePassword
    Invoke-PfaDbRefresh -RefreshDatabase $(refreshDatabase) `
                        -RefreshSource   $(refreshSource) `
                        -DestSqlInstance $(refreshTarget) `
                        -PfaEndpoint     $(pfaEndpoint) `
                        -PfaCredentials  $pfaCreds
- script: sqlpackage.exe /Action:Publish /SourceFile:"$(System.DefaultWorkingDirectory)\AzureDevOps-Fa-Snapshot-Ci-Pipeline\bin\Release\AzureDevOps-Fa-Snapshot-Ci-Pipeline.dacpac" /TargetConnectionString:"server=$(refreshTarget);database=$(refreshDatabase)"
  displayName: 'Apply DACPAC'
```

Lines 25 through 29 of the YAML are where the database refresh magic takes place, courtesy of the Invoke-PfaDbRefresh function, a member of the freely available PureStorageDbaTools module.
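
One possible variation on the refresh step, offered as a sketch rather than as the repository's approach: the secret pipeline variable can be mapped into the step's environment instead of being expanded directly into the script text. The environment variable name `PFA_PASSWORD` and the display name are hypothetical; the `Invoke-PfaDbRefresh` parameters are the ones used in the excerpt.

```yaml
steps:
- powershell: |
    # Read the secret from the environment instead of inlining it into the script
    $securePassword = ConvertTo-SecureString -String $env:PFA_PASSWORD -AsPlainText -Force
    $pfaCreds = New-Object System.Management.Automation.PSCredential '$(pfaUsername)', $securePassword
    Invoke-PfaDbRefresh -RefreshDatabase $(refreshDatabase) `
                        -RefreshSource   $(refreshSource) `
                        -DestSqlInstance $(refreshTarget) `
                        -PfaEndpoint     $(pfaEndpoint) `
                        -PfaCredentials  $pfaCreds
  displayName: 'Refresh test database'   # hypothetical display name
  env:
    PFA_PASSWORD: $(pfaPassword)         # secret variables must be mapped explicitly
```

With this form, a password that happens to contain a single quote does not break the PowerShell string literal, because the value is never substituted into the script source.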

The Power of FlashArray Snapshots

What makes FlashArray snapshots special?

- FlashArray does not require any complex and separately licensed software to perform database refreshes,

- There is no performance impact on source databases when performing database refreshes. This includes even the most mission-critical and performance-critical source databases,

- Snapshots are immutable and not dependent on other snapshots. Multiple snapshots of a volume can be taken and when a snapshot is removed, this has zero impact on the rest,

- FlashArray’s metadata engine is not constrained by storage controller memory,

- FlashArray uses redirect-on-write snapshots. As per Snapshot 101: Copy-on-write vs Redirect-on-write by StorageSwiss.com, redirect-on-write snapshots are superior to their copy-on-write counterparts in a number of key areas. First, no computational overhead is incurred when reading snapshots that use redirect-on-write. Second, modifying a protected block takes one third of the IO operations that a copy-on-write system incurs: a single write, rather than a read followed by two writes. And lastly, to quote the article directly:

“The more snapshots are created and the longer they are stored, the greater the impact to performance on the protected entity.”

The Good News

This solution is just a taste of the power and flexibility that FlashArray and the PureStorageDbaTools PowerShell module can provide. More advanced continuous integration workflows might include:

- Refreshing multiple target databases in parallel,

- Building data obfuscation and masking into the CI workflow via the ability of the PureStorageDbaTools module to leverage SQL Server dynamic data masking.
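
As an illustration of the first idea, the sketch below uses an Azure Pipelines matrix strategy to fan the refresh out across several targets. It assumes the self-hosted pool has more than one agent available. The job name, matrix entry names, the `targetInstance` variable and the instance names `sqltest01` and `sqltest02` are all hypothetical; the `Invoke-PfaDbRefresh` parameters are those used in the pipeline excerpt.

```yaml
jobs:
- job: RefreshTargets          # hypothetical job name
  pool: $(agentPool)
  strategy:
    maxParallel: 2             # upper bound on simultaneously running matrix legs
    matrix:
      test01:
        targetInstance: 'sqltest01'   # hypothetical SQL Server instance
      test02:
        targetInstance: 'sqltest02'   # hypothetical SQL Server instance
  steps:
  - powershell: |
      $securePassword = ConvertTo-SecureString -String '$(pfaPassword)' -AsPlainText -Force
      $pfaCreds = New-Object System.Management.Automation.PSCredential '$(pfaUsername)', $securePassword
      # Each matrix leg refreshes its own target from the same source
      Invoke-PfaDbRefresh -RefreshDatabase $(refreshDatabase) `
                          -RefreshSource   $(refreshSource) `
                          -DestSqlInstance $(targetInstance) `
                          -PfaEndpoint     $(pfaEndpoint) `
                          -PfaCredentials  $pfaCreds
    displayName: 'Refresh $(targetInstance)'
```

Each matrix entry becomes its own job with its own value of `targetInstance`, so adding another target is a matter of adding another entry.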

Continuous integration pipeline performance is critical when it comes to time to market for the delivery of new software-based products and services. If your organisation would like to perform continuous integration at the speed of flash, Pure Storage is here to help.

Further Reading

Azure Pipeline with FlashArray Database Refresh GitHub repo

Empowering SQL Server DBAs Via Snapshots and PowerShell blog post

PureStorageDbaTools module on PowerShell gallery