Streamlining Azure VMware Solution: Automating Pure Cloud Block Store Expansion

This blog post explores the seamless integration of Azure Functions for managing Azure VMware...

A wealth of technical detail for working with Pure products.

Data sprawl is a struggle storage admins know all too well. Learn how the...

In part three of our DevSecOps series, we delve into static and dynamic code...

The commonly accepted measure of performance for storage systems has long been IOPS, but...

At a Pure Storage Hackathon, Martin Vich explored how to limit data traffic to...

Learn how you can lower your cloud storage bill without compromising speed...

Learn how to keep your storage current without the constant need to use more...

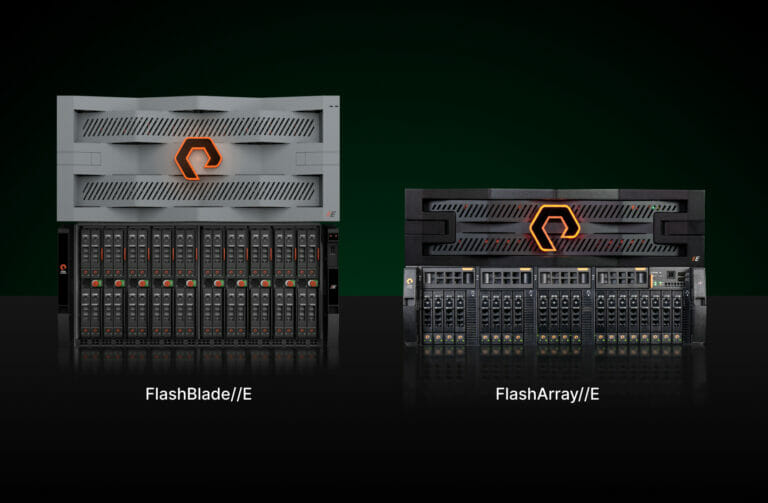

FlashArray//E, the newest addition to the Pure//E family, further extends the energy savings and...

With VMFS-6, space reclamation is now an automatic but asynchronous process. This is great...

We conducted testing in our lab to validate that SQreamDB software functionally works with...

In Linux, ASMLib provides the wrapper for managing the ASM disk. Here's an example run...

Scaling Pure Cloud Block Store capacity is becoming even simpler with an on-demand, self-service,...

Miranda Steele, on our REST API and FlashArray programmability team, shares her deep knowledge...

The difference between Pure Storage AIRI and other solutions is, of course, the Pure Storage components of the stack and our top-ranked Pure Storage customer experience.

Senior technology, product and solutions marketing leader

In the next installment of our series, we look at some of the advantages...

Part 2 of our three-part blog series shows how to take data residing on...

This blog post walks you through the simple steps to self-onboard an Azure VMware...

In the third installment of a series on Azure VMware Solution and Pure Cloud...
