As discussed in my previous blog posts, it is our opinion that using 100% non-reducible data for both the datasets and the workloads in a synthetic performance test of a data-reduction storage array is methodologically unsound.

It’s akin to testing the performance of an industrial waste compactor by filling it with steel ball bearings. Perhaps an interesting corner-case test, but essentially a waste of time.

Our experience with many customers is that almost all datasets and workloads are reducible by a Pure Storage FlashArray. This is reflected in our continually updated Flash Reduce Ticker.

The whole point of testing the performance of a data-reduction storage array is to measure it while the array is also reducing your data, or a defensible approximation of your data, under load.

To illustrate this, I ran two series of performance tests on a Pure Storage FA-405.

The first series of tests used non-reducible workloads against a non-reducible dataset. The second series used “Oracle vdbench 5.04.03 4/4k/4” data (which reduces to about 5.2:1 on a Pure Storage FlashArray) for both the dataset and the workloads.

Our standard performance testing configuration, consisting of 1 “command” VM and 8 “worker” VMs, was used to run these scripts.

Oracle’s vdbench tool 5.04.03 was used.
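The host (`hd=`) and storage (`sd=`) definitions in the parameter files below repeat one pattern for each of the 8 worker VMs and their 4 LUNs. As a minimal sketch, they could be generated rather than typed by hand. The function names here are hypothetical, and the hostnames (`vdb-001` to `vdb-008`) and device paths (`/dev/sdb` to `/dev/sde`) are simply the ones used in this test setup, so adjust them to your own environment.

```python
def host_definitions(workers=8, prefix="vdb-"):
    """Return the hd= lines for the worker VMs (hypothetical helper)."""
    lines = ["hd=default,user=root,shell=ssh,jvms=8"]
    for i in range(1, workers + 1):
        name = f"{prefix}{i:03d}"  # vdb-001, vdb-002, ...
        lines.append(f"hd={name},system={name}")
    return lines


def storage_definitions(devices=("/dev/sdb", "/dev/sdc", "/dev/sdd", "/dev/sde")):
    """Return the sd= lines, one per LUN, using the $host substitution."""
    lines = ["sd=default,openflags=directio,align=4k"]
    for n, device in enumerate(devices, start=1):
        lines.append(f"sd=sd{n}_$host,host=$host,lun={device}")
    return lines


print("\n".join(host_definitions() + storage_definitions()))
```

Running it prints the same `hd=` and `sd=` lines that appear in each of the four parameter files in this post.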

For the non-reducible test, the FlashArray was filled to 50% with non-reducible data using these Oracle vdbench 5.04.03 parameters:

    dedupratio=1
    dedupunit=4k
    compratio=1
    messagescan=no
    hd=default,user=root,shell=ssh,jvms=8
    hd=vdb-001,system=vdb-001
    hd=vdb-002,system=vdb-002
    hd=vdb-003,system=vdb-003
    hd=vdb-004,system=vdb-004
    hd=vdb-005,system=vdb-005
    hd=vdb-006,system=vdb-006
    hd=vdb-007,system=vdb-007
    hd=vdb-008,system=vdb-008
    sd=default,openflags=directio,align=4k
    sd=sd1_$host,host=$host,lun=/dev/sdb
    sd=sd2_$host,host=$host,lun=/dev/sdc
    sd=sd3_$host,host=$host,lun=/dev/sdd
    sd=sd4_$host,host=$host,lun=/dev/sde
    wd=wd_default,sd=*
    wd=fill,sd=sd*,xfersize=(4k,3,8k,8,16k,11,32k,12,64k,19,128k,21,256k,26),rdpct=0,seekpct=eof
    rd=default
    rd=fill,wd=fill,iorate=max,interval=60,elapsed=172800,forthreads=8

The non-reducible IOPS ramp tests were done using these Oracle vdbench 5.04.03 parameters:

    dedupratio=1
    dedupunit=4k
    compratio=1
    messagescan=no
    hd=default,user=root,shell=ssh,jvms=8
    hd=vdb-001,system=vdb-001
    hd=vdb-002,system=vdb-002
    hd=vdb-003,system=vdb-003
    hd=vdb-004,system=vdb-004
    hd=vdb-005,system=vdb-005
    hd=vdb-006,system=vdb-006
    hd=vdb-007,system=vdb-007
    hd=vdb-008,system=vdb-008
    sd=default,openflags=directio,align=4k
    sd=sd1_$host,host=$host,lun=/dev/sdb
    sd=sd2_$host,host=$host,lun=/dev/sdc
    sd=sd3_$host,host=$host,lun=/dev/sdd
    sd=sd4_$host,host=$host,lun=/dev/sde
    wd=wd1,xfersize=(4k,9,8k,47,16k,21,32k,17,64k,6),seekpct=100,sd=*
    rd=default,curve=(30-99,3),iorate=curve,interval=5,warmup=5,elapsed=300,pause=0,maxdata=10t
    rd=rd1,wd=wd1,forrdpct=(10,80,35,65),forthreads=8

The “Oracle vdbench 5.04.03 4/4k/4” fill (which reduces to about 5.2:1 on a Pure Storage FlashArray) was done using these Oracle vdbench 5.04.03 parameters:

    dedupratio=4
    dedupunit=4k
    compratio=4
    messagescan=no
    hd=default,user=root,shell=ssh,jvms=8
    hd=vdb-001,system=vdb-001
    hd=vdb-002,system=vdb-002
    hd=vdb-003,system=vdb-003
    hd=vdb-004,system=vdb-004
    hd=vdb-005,system=vdb-005
    hd=vdb-006,system=vdb-006
    hd=vdb-007,system=vdb-007
    hd=vdb-008,system=vdb-008
    sd=default,openflags=directio,align=4k
    sd=sd1_$host,host=$host,lun=/dev/sdb
    sd=sd2_$host,host=$host,lun=/dev/sdc
    sd=sd3_$host,host=$host,lun=/dev/sdd
    sd=sd4_$host,host=$host,lun=/dev/sde
    wd=wd_default,sd=*
    wd=fill,sd=sd*,xfersize=(4k,3,8k,8,16k,11,32k,12,64k,19,128k,21,256k,26),rdpct=0,seekpct=eof
    rd=default
    rd=fill,wd=fill,iorate=max,interval=60,elapsed=172800,forthreads=8

The “Oracle vdbench 5.04.03 4/4k/4” IOPS ramp tests were done using these Oracle vdbench 5.04.03 parameters:

    dedupratio=4
    dedupunit=4k
    compratio=4
    messagescan=no
    hd=default,user=root,shell=ssh,jvms=8
    hd=vdb-001,system=vdb-001
    hd=vdb-002,system=vdb-002
    hd=vdb-003,system=vdb-003
    hd=vdb-004,system=vdb-004
    hd=vdb-005,system=vdb-005
    hd=vdb-006,system=vdb-006
    hd=vdb-007,system=vdb-007
    hd=vdb-008,system=vdb-008
    sd=default,openflags=directio,align=4k
    sd=sd1_$host,host=$host,lun=/dev/sdb
    sd=sd2_$host,host=$host,lun=/dev/sdc
    sd=sd3_$host,host=$host,lun=/dev/sdd
    sd=sd4_$host,host=$host,lun=/dev/sde
    wd=wd1,xfersize=(4k,9,8k,47,16k,21,32k,17,64k,6),seekpct=100,sd=*
    rd=default,curve=(30-99,3),iorate=curve,interval=5,warmup=5,elapsed=300,pause=0,maxdata=10t
    rd=rd1,wd=wd1,forrdpct=(10,80,35,65),forthreads=8

On a Pure Storage FA-405 with 44 SSDs running Purity 4.5.0, Oracle vdbench reported that, on average, “Oracle vdbench 5.04.03 4/4k/4” workloads on the “Oracle vdbench 5.04.03 4/4k/4” dataset resulted in…

- 66% more "10% uniform random read / 90% uniform random write" IOPS
- 85% more "80% uniform random read / 20% uniform random write" IOPS
- 63% more "35% uniform random read / 65% uniform random write" IOPS
- 80% more "65% uniform random read / 35% uniform random write" IOPS

… than “Oracle vdbench 5.04.03 1/4k/1” workloads, on the “Oracle vdbench 5.04.03 1/4k/1” dataset.

And remember that this increase in IOPS came while the Pure Storage FlashArray was delivering roughly 5.2 times the data reduction (about 5.2:1, versus essentially 1:1 for the non-reducible data).
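To make the arithmetic behind these comparisons explicit, here is a small sketch that converts the "N% more IOPS" figures into multipliers over the non-reducible baseline, and converts a reduction ratio such as 5.2:1 into the fraction of physical space saved. The helper names are hypothetical; the inputs are the figures quoted above.

```python
def iops_multiplier(percent_more):
    """'66% more IOPS' means 1.66 times the baseline IOPS."""
    return 1.0 + percent_more / 100.0


def space_saved_percent(reduction_ratio):
    """A reduction ratio of R:1 stores the data in 1/R of the space."""
    if reduction_ratio < 1.0:
        raise ValueError("a reduction ratio below 1:1 is not meaningful here")
    return (1.0 - 1.0 / reduction_ratio) * 100.0


# Read percentage of each ramp test -> observed IOPS gain (from the list above).
observed_gains = {10: 66, 80: 85, 35: 63, 65: 80}

for read_pct, gain in observed_gains.items():
    print(f"{read_pct}% read: {iops_multiplier(gain):.2f}x the baseline IOPS")

print(f"5.2:1 reduction saves {space_saved_percent(5.2):.1f}% of the space")
```

In other words, a 5.2:1 reduction means the same logical data occupies a little under one fifth of the physical capacity.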

I don’t know how much this dataset/workloads combination will reduce on other data-reduction storage arrays, or how it will affect their maximum data throughput rates. However, when deciding which data-reduction storage array to deploy, our suggestion is that it is wise to find this out for yourself.
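The ramp tests report IOPS, so comparing data throughput between arrays requires the mean transfer size of the workload mix. The sketch below derives it from the `xfersize` size/percentage lists used in the parameter files above and converts an IOPS figure into MiB/s. It assumes that `k` means 1024 bytes and handles only `k`-suffixed sizes; the function names are hypothetical and the IOPS value is whatever your own run reports.

```python
RAMP_XFERSIZE = "(4k,9,8k,47,16k,21,32k,17,64k,6)"
FILL_XFERSIZE = "(4k,3,8k,8,16k,11,32k,12,64k,19,128k,21,256k,26)"


def parse_xfersize(spec):
    """Turn '(4k,9,8k,47,...)' into [(size_kib, percent), ...]."""
    tokens = [t.strip().lower() for t in spec.strip().strip("()").split(",")]
    if len(tokens) % 2 != 0:
        raise ValueError("expected size,percent pairs")
    pairs = []
    for size, percent in zip(tokens[0::2], tokens[1::2]):
        if not size.endswith("k"):
            raise ValueError(f"only k-suffixed sizes are handled: {size}")
        pairs.append((int(size[:-1]), float(percent)))
    if abs(sum(p for _, p in pairs) - 100.0) > 1e-9:
        raise ValueError("percentages must add up to 100")
    return pairs


def mean_xfer_kib(spec):
    """Percentage-weighted mean transfer size in KiB."""
    return sum(size * percent for size, percent in parse_xfersize(spec)) / 100.0


def throughput_mib_per_s(iops, spec):
    """Convert an IOPS figure into MiB/s for the given transfer-size mix."""
    return iops * mean_xfer_kib(spec) / 1024.0


print(f"ramp mix: {mean_xfer_kib(RAMP_XFERSIZE):.2f} KiB per I/O")
print(f"fill mix: {mean_xfer_kib(FILL_XFERSIZE):.2f} KiB per I/O")
```

The ramp mix averages 16.76 KiB per I/O and the fill mix 111.96 KiB, so the same IOPS number corresponds to very different throughput depending on which mix produced it.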

Our recommendation is that, whenever possible, test the performance of a data-reduction storage array using your actual workloads on your actual datasets. We have implemented the “Love Your Storage Guarantee” to make this practical.

However, if you decide to use synthetic tests, please consider using ones that utilize datasets and workloads that have reducibility rates similar to your actual data.

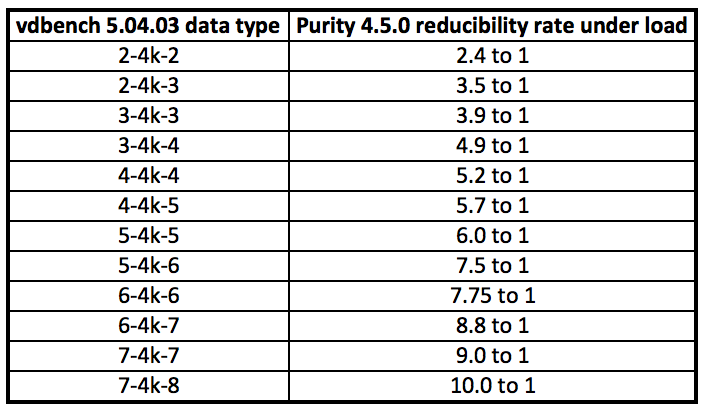

One way to figure out how to design your Oracle vdbench scripts is to:

– load a large sample of your data on to a Pure Storage FlashArray

– observe the reducibility rate under load as reported by Purity

– use the table below to alter your Oracle vdbench general parameters section

– test this script on all of the data-reduction storage arrays you are considering
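The third step above can be sketched as a small script that swaps the general parameters (`dedupratio`, `dedupunit`, `compratio`) at the top of an existing vdbench parameter file. The function names are hypothetical, and the script does not try to map an observed reducibility rate to parameter values; that choice still comes from the table.

```python
GENERAL_KEYS = ("dedupratio=", "dedupunit=", "compratio=")


def general_parameters(dedup_ratio, comp_ratio, dedup_unit="4k"):
    """Return the three general-parameter lines for the chosen ratios."""
    if dedup_ratio < 1 or comp_ratio < 1:
        raise ValueError("ratios must be at least 1")
    return [
        f"dedupratio={dedup_ratio:g}",
        f"dedupunit={dedup_unit}",
        f"compratio={comp_ratio:g}",
    ]


def retarget(parameter_text, dedup_ratio, comp_ratio, dedup_unit="4k"):
    """Replace the general parameters, leaving hd/sd/wd/rd lines untouched."""
    body = [
        line
        for line in parameter_text.splitlines()
        if not line.strip().startswith(GENERAL_KEYS)
    ]
    header = general_parameters(dedup_ratio, comp_ratio, dedup_unit)
    return "\n".join(header + body) + "\n"


non_reducible = "dedupratio=1\ndedupunit=4k\ncompratio=1\nmessagescan=no\n"
print(retarget(non_reducible, dedup_ratio=4, comp_ratio=4), end="")
```

Keeping everything except the general parameters identical is what makes the results comparable across the arrays you test.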

Summary:

A Pure Storage FlashArray is designed to provide high data throughput, with low response times, while at the same time delivering industry-leading reducibility rates.

We invite you to test this for yourself.

It is our hope that this blog will help convince you that, if anyone suggests running a performance test on a data-reduction storage array with a synthetic test designed to use entirely non-reducible data for both the workloads and the dataset, you should dismiss the suggestion as methodologically unsound.